
2026: Apex Logic's Blueprint for Architecting AI-Driven UI/UX in Serverless Enterprise Web Development

As Lead Cybersecurity & AI Architect at Apex Logic, I've witnessed the rapid convergence of artificial intelligence and serverless architectures reshape the landscape of enterprise web development. In 2026, this convergence is no longer a theoretical discussion but an urgent strategic imperative, particularly concerning UI/UX. Organizations that fail to proactively integrate these technologies risk falling behind in user experience, operational efficiency, and cost management. This article presents Apex Logic's blueprint for navigating this complex terrain, focusing on how AI-driven UI/UX can be responsibly architected within a serverless enterprise framework, leveraging FinOps, GitOps, and open-source AI for optimal AI alignment, enhanced engineering productivity, and robust release automation.

The goal is clear: empower CTOs and lead engineers to build highly responsive, personalized, and cost-efficient user interfaces while upholding ethical AI principles. We must move beyond simple integration to a holistic strategy that encompasses architecture, operational excellence, and a deep understanding of trade-offs.

The Strategic Imperative: AI-Driven UI/UX in Serverless Enterprise

The demand for highly personalized and adaptive user experiences has never been greater. Traditional UI/UX paradigms, often static and labor-intensive, struggle to meet these expectations. This is where AI-driven capabilities become transformative, especially when coupled with the agility of serverless computing.

Redefining User Experience with Generative AI

Generative AI, in particular, is poised to change how users interact with applications. Imagine interfaces that adapt to user behavior, preferences, and context in real time. This goes beyond simple personalization: the AI actively generates or modifies UI components, content, and workflows to optimize engagement and task completion. For instance, an enterprise application could use a large language model (LLM) to generate context-sensitive help documentation, suggest next best actions based on user history, or re-arrange dashboard elements to prioritize critical information. Accessibility can also improve substantially, with AI generating alternative text for images, providing real-time language translation, or adapting visual elements for users with specific needs. All of this requires a robust backend, and serverless functions provide the elastic compute needed to handle the bursty, often unpredictable demands of AI inference.

Serverless as the Foundation for Agility and Scale

Serverless architectures offer a compelling foundation for modern UI/UX development. By abstracting away infrastructure management, development teams can focus on delivering features. For AI-driven UI/UX, this translates to:

- Micro-frontends: Serverless functions and services are ideal for hosting independent micro-frontends or UI components, each potentially incorporating its own AI model or inference logic. This enables independent deployment and scaling.

- Cost Efficiency (FinOps Synergy): Billing based on actual usage aligns perfectly with the often sporadic nature of AI inference requests. This inherent cost-effectiveness is a cornerstone of our FinOps strategy.

- Auto-scaling: Serverless platforms automatically scale resources up and down to match demand, ensuring high availability and responsiveness even during peak AI processing loads without manual intervention.

Architecting for Responsible AI Alignment and Engineering Productivity

Integrating AI, especially open-source AI, requires a deliberate architectural approach that prioritizes ethical considerations and operational efficiency. Responsible AI and AI alignment are not afterthoughts; they are foundational pillars for sustainable innovation.

Integrating Open-Source AI for UI/UX Innovation

The rapid advancements in the open-source AI ecosystem (e.g., pre-trained models on the Hugging Face Hub, built with frameworks such as PyTorch and TensorFlow) provide significant opportunities for innovation, often without the licensing costs of proprietary solutions. For UI/UX, this might involve fine-tuning models for specific tasks like sentiment analysis from user feedback, predictive analytics for user paths, or generating UI copy. However, this also introduces challenges:

- Model Selection & Fine-tuning: Choosing the right pre-trained model and fine-tuning it with enterprise-specific data is crucial. This requires expertise in data engineering and machine learning operations (MLOps).

- Bias Mitigation: Open-source models, trained on vast public datasets, can inherit biases. Implementing bias detection and mitigation strategies (e.g., adversarial debiasing, fairness-aware training) is paramount to ensure responsible AI and prevent discriminatory UI outcomes.

- Ethical Guardrails: Establishing clear ethical guidelines for AI model usage, data handling, and user interaction is non-negotiable. Regular audits and human-in-the-loop mechanisms are vital for maintaining AI alignment.
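
To make the bias-detection point more concrete, one simple check is to compare how often the model produces a given outcome for different user groups. The sketch below computes a demographic parity gap. The record shape (`group`, `shown`), the sample data, and the 0.1 threshold are illustrative assumptions rather than a standard, and a real audit would likely combine several fairness metrics.

```javascript
// Hypothetical audit records: whether each user in a group was shown a
// particular recommendation. Field names are assumptions for illustration.
function positiveRateByGroup(records) {
  const totals = new Map();
  for (const { group, shown } of records) {
    const entry = totals.get(group) ?? { count: 0, positives: 0 };
    entry.count += 1;
    if (shown) entry.positives += 1;
    totals.set(group, entry);
  }
  const rates = {};
  for (const [group, { count, positives }] of totals) {
    rates[group] = positives / count;
  }
  return rates;
}

// Demographic parity gap: the largest difference in positive rate
// between any two groups (0 means identical rates).
function demographicParityGap(records) {
  const rates = Object.values(positiveRateByGroup(records));
  if (rates.length === 0) return 0;
  return Math.max(...rates) - Math.min(...rates);
}

const sample = [
  { group: 'A', shown: true },
  { group: 'A', shown: true },
  { group: 'A', shown: false },
  { group: 'A', shown: false },
  { group: 'B', shown: true },
  { group: 'B', shown: false },
  { group: 'B', shown: false },
  { group: 'B', shown: false },
];

const MAX_ALLOWED_GAP = 0.1; // assumed policy threshold, not a standard value
const gap = demographicParityGap(sample);
console.log(`parity gap: ${gap}, flagged: ${gap > MAX_ALLOWED_GAP}`);
```

A check like this could run in the test stage of the pipeline, so that a model version exceeding the agreed gap is held for human review instead of being promoted automatically.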

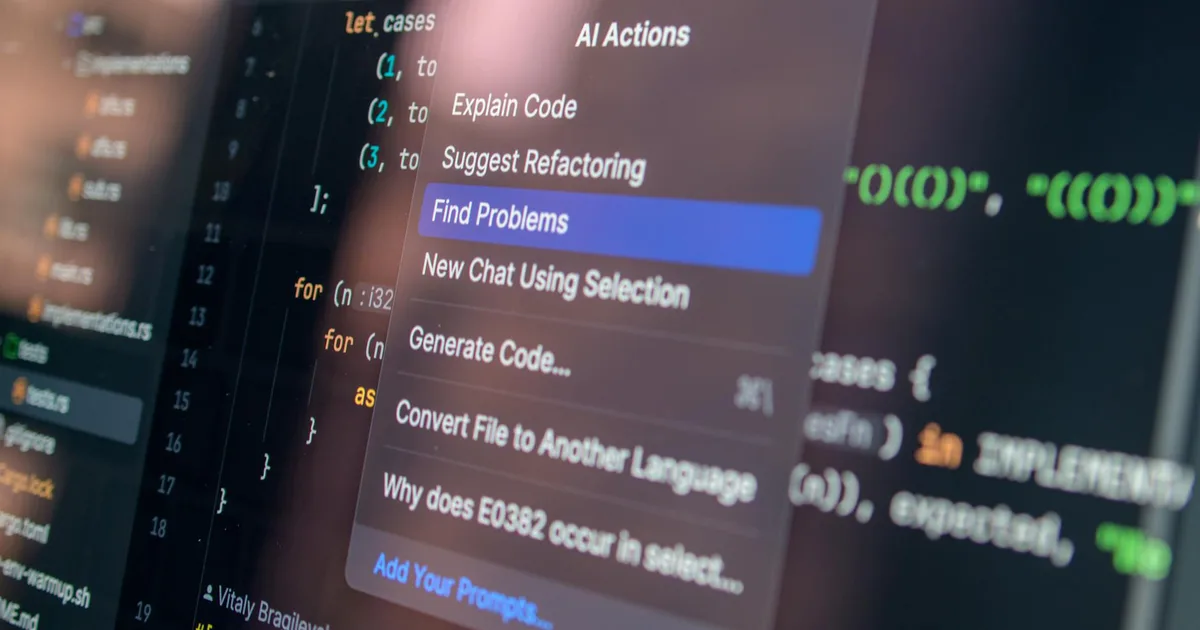

Consider a scenario where an AI dynamically generates product recommendations. An open-source AI model fine-tuned on internal product data could power this. The inference might occur via a serverless function, triggered by user interaction. Here's a conceptual code snippet demonstrating client-side invocation of a serverless AI endpoint:

```javascript
// Client-side JavaScript for an AI-driven recommendation widget.
// renderRecommendations and renderDefaultRecommendations are assumed to be
// defined elsewhere in the widget.
async function fetchRecommendations(userId, currentProduct) {
  try {
    const response = await fetch('/api/ai-recommendations', {
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
      },
      body: JSON.stringify({ userId, currentProduct }),
    });

    if (!response.ok) {
      throw new Error(`HTTP error! status: ${response.status}`);
    }

    const data = await response.json();
    renderRecommendations(data.recommendations);
  } catch (error) {
    console.error('Error fetching recommendations:', error);
    // Fallback to default recommendations or error message
    renderDefaultRecommendations();
  }
}
```

FinOps: Optimizing Cloud Spend for AI Workloads

While serverless inherently offers cost benefits, AI-driven workloads can still incur significant expenses if not managed carefully. Our FinOps strategy for 2026 focuses on proactive cost optimization:

- Granular Monitoring: Implementing detailed monitoring of AI inference costs per function, per model, and per user segment. Tools like AWS Cost Explorer, Azure Cost Management, or Google Cloud Billing Reports, augmented with custom tags, are essential.

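- Resource Right-Sizing: Continuously evaluating and adjusting the resources allocated to serverless functions running AI models. On many function platforms the main control is memory, with CPU scaling alongside it, and GPU access usually requires a container-based or dedicated inference service. Over-provisioning is a common FinOps pitfall.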

- Caching Strategies: Leveraging edge caching (e.g., CDN for UI assets, Lambda@Edge for AI inference results) to reduce redundant AI computations and minimize latency and costs.

- Spot Instances for Training: Inference usually needs consistent availability, but model training jobs can typically tolerate interruption. Running them on spot instances or preemptible VMs, orchestrated through the MLOps pipeline, can yield significant cost savings.
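
As a rough illustration of the right-sizing arithmetic, usage-based function billing is commonly modeled as compute (GB-seconds) plus a per-request charge. The sketch below assumes that model. The prices are placeholders to be replaced with your provider's current rates, and the function name and input fields are hypothetical.

```javascript
// Placeholder prices (assumptions): substitute your provider's published rates.
const ASSUMED_PRICING = {
  perGbSecond: 0.0000166667,
  perMillionRequests: 0.2,
};

// Estimates monthly cost for one function from invocation count,
// average duration, and configured memory.
function estimateMonthlyInferenceCost(
  { invocations, avgDurationMs, memoryMb },
  pricing = ASSUMED_PRICING
) {
  const gbSeconds = invocations * (avgDurationMs / 1000) * (memoryMb / 1024);
  const computeCost = gbSeconds * pricing.perGbSecond;
  const requestCost = (invocations / 1_000_000) * pricing.perMillionRequests;
  return { gbSeconds, computeCost, requestCost, total: computeCost + requestCost };
}

// Compare two memory settings for the same hypothetical workload.
const baseline = estimateMonthlyInferenceCost({
  invocations: 2_000_000,
  avgDurationMs: 500,
  memoryMb: 2048,
});
const rightSized = estimateMonthlyInferenceCost({
  invocations: 2_000_000,
  avgDurationMs: 500,
  memoryMb: 1024,
});

console.log('baseline total:', baseline.total.toFixed(2));
console.log('right-sized total:', rightSized.total.toFixed(2));
```

The comparison holds duration constant for simplicity. In practice, lowering memory can lengthen inference time, so the duration should be re-measured at each setting before drawing conclusions.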

GitOps and Release Automation for Seamless Deployment

Achieving high engineering productivity and reliable deployments for complex AI-driven serverless UIs necessitates a robust operational framework. GitOps provides the declarative, version-controlled approach needed for this complexity, while release automation ensures speed and consistency.

GitOps for Declarative UI/UX Infrastructure

GitOps extends the principles of Git to operations, treating infrastructure and application configurations as code. For AI-driven micro-frontends and their associated serverless backends, this means:

- Single Source of Truth: Git repositories become the definitive source for all UI/UX configuration, serverless function definitions, API gateways, and even AI model deployment manifests.

- Automated Reconciliation: Tools like Argo CD or Flux CD continuously monitor the Git repository and ensure the actual state of the infrastructure matches the desired state declared in Git.

- Auditability and Rollbacks: Every change is version-controlled, providing a complete audit trail and enabling quick, reliable rollbacks to previous stable states. This is critical when deploying new AI model versions that might introduce unforeseen issues.

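Here's a simplified Argo CD Application manifest for deploying an AI-driven recommendation widget as a serverless micro-frontend. Because Argo CD reconciles against a Kubernetes cluster, this example assumes the widget's serverless runtime is hosted on Kubernetes (for example, via Knative):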

```yaml
# Example GitOps Manifest for a Serverless AI-Driven Micro-Frontend
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: ai-recommendation-widget
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://github.com/apex-logic/enterprise-ui-components.git
    targetRevision: HEAD
    path: apps/recommendation-widget
  destination:
    server: https://kubernetes.default.svc
    namespace: ai-frontend
  syncPolicy:
    automated:
      prune: true      # delete resources that were removed from Git
      selfHeal: true   # revert manual drift in the cluster
    syncOptions:
      - CreateNamespace=true
    retry:
      limit: 5
      backoff:
        duration: 5s
        factor: 2
        maxDuration: 3m
```

CI/CD Pipelines for AI-Enhanced Front-Ends

Robust CI/CD pipelines are the engine of release automation, ensuring that new AI-driven UI features and model updates are delivered quickly and reliably. Key components include:

- Automated Testing: Unit, integration, and end-to-end tests for both UI components and the underlying AI inference logic. This must include tests for AI model performance metrics and bias detection.

- Model Versioning and Registry: Integrating with an MLOps platform (e.g., MLflow, Amazon SageMaker) to manage AI model versions, track lineage, and store metadata.

- Canary Deployments & A/B Testing: Deploying new AI models or UI features to a small subset of users first, monitoring performance and user feedback, before a full rollout. This minimizes risk and enables data-driven decision-making.

- Infrastructure as Code (IaC): Using tools like Terraform or AWS CloudFormation to define and manage the serverless infrastructure, ensuring consistency across environments.

This integrated approach significantly boosts engineering productivity by reducing manual errors and accelerating the feedback loop between development and production.
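
The canary step listed above depends on routing a stable, repeatable subset of users to the new model. One common way to do this is to hash the user ID into a bucket and compare it with the rollout percentage. The following is a minimal sketch under that assumption. The function names are hypothetical, and the hash is an FNV-1a variant applied to UTF-16 code units, chosen only because it is short and deterministic.

```javascript
// FNV-1a style 32-bit hash over UTF-16 code units (not cryptographic).
function fnv1a32(text) {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return hash >>> 0; // force unsigned 32-bit
}

// Maps a user to one of 100 buckets; the same user always lands in the
// same bucket, so their experience stays consistent during the rollout.
function selectModelVersion(userId, canaryPercent) {
  const bucket = fnv1a32(String(userId)) % 100;
  return bucket < canaryPercent ? 'canary' : 'stable';
}

// Example: route roughly 10% of users to the canary model.
for (const userId of ['user-1', 'user-2', 'user-3']) {
  console.log(userId, '->', selectModelVersion(userId, 10));
}
```

Because the assignment depends only on the user ID and the percentage, raising the percentage keeps existing canary users in the canary group and only adds new ones.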

Navigating Trade-offs and Mitigating Failure Modes

No advanced architecture comes without its challenges. Understanding the inherent trade-offs and potential failure modes is crucial for building resilient AI-driven serverless enterprise applications.

Performance vs. AI Complexity

Integrating sophisticated AI models can introduce latency. A key trade-off lies in balancing the richness of the AI experience with user expectations for speed.

- Failure Mode: Slow UI responsiveness due to high AI inference latency.

- Mitigation: Implement edge computing for critical, low-latency AI inference (e.g., using Lambda@Edge or Cloudflare Workers AI). Pre-compute AI results where possible. Optimize model size and complexity. Consider client-side AI for simple tasks (e.g., form validation, basic recommendations) using WebAssembly or ONNX Runtime Web.
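
Where results can be pre-computed or reused, a small time-to-live cache in front of the inference call avoids repeating identical work. The sketch below is one possible shape. `TtlCache` and `withCache` are hypothetical names, and the injectable clock exists only to make the behavior easy to test.

```javascript
// Minimal in-memory cache whose entries expire after ttlMs milliseconds.
class TtlCache {
  constructor(ttlMs, now = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now;
    this.entries = new Map();
  }

  get(key) {
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (this.now() >= entry.expiresAt) {
      this.entries.delete(key); // expired: drop and report a miss
      return undefined;
    }
    return entry.value;
  }

  set(key, value) {
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
  }
}

// Wraps an inference function so repeated inputs within the TTL
// return the stored result instead of calling the model again.
function withCache(inferFn, cache, keyFn = JSON.stringify) {
  return (input) => {
    const key = keyFn(input);
    const cached = cache.get(key);
    if (cached !== undefined) return cached;
    const result = inferFn(input);
    cache.set(key, result);
    return result;
  };
}
```

In a serverless function, module-level memory like this only survives while an instance stays warm and is not shared between instances, so it complements rather than replaces an edge or external cache. If `inferFn` returns a promise, the promise itself is stored, which means a rejected call would also be cached until it expires.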

Data Privacy and Security Challenges

Leveraging user data for AI personalization, especially with open-source AI models, raises significant privacy and security concerns.

- Failure Mode: Data breaches, non-compliance with regulations (e.g., GDPR, CCPA), or unintended data leakage through AI models.

- Mitigation: Implement robust data anonymization and pseudonymization techniques. Grant serverless functions and AI models access to data according to the principle of least privilege, so each component can read only what it needs. Conduct regular security audits and penetration testing. Encrypt data at rest and in transit. Establish clear data governance policies for AI training and inference data.
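
A complementary measure in the spirit of least privilege is to strip every field the model does not need before a request leaves the client or function. This is data minimization rather than anonymization, and it is shown here only as a sketch. The allow-list contents and the payload fields are assumptions for illustration.

```javascript
// Fields the (hypothetical) recommendation endpoint actually needs.
const ALLOWED_FIELDS = ['userId', 'currentProduct', 'locale'];

// Returns a copy of payload containing only allow-listed fields, so
// attributes such as email or free-text notes never reach the model.
function minimizePayload(payload, allowedFields = ALLOWED_FIELDS) {
  const minimized = {};
  for (const field of allowedFields) {
    if (Object.prototype.hasOwnProperty.call(payload, field)) {
      minimized[field] = payload[field];
    }
  }
  return minimized;
}

const raw = {
  userId: 'u-123',
  currentProduct: 'sku-42',
  email: 'person@example.com',
  supportNotes: 'free text that may contain personal data',
};

console.log(minimizePayload(raw));
```

An allow-list fails closed: a field added to the payload later is dropped by default until someone deliberately approves it.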

Vendor Lock-in and Portability

While serverless offers many advantages, relying heavily on a single cloud provider's proprietary services can lead to vendor lock-in.

- Failure Mode: Difficulty migrating to another cloud provider or on-premises solution due to deep integration with specific platform services.

- Mitigation: Prioritize serverless functions and managed services that adhere to open standards or have analogous implementations across major cloud providers. Package AI workloads as standard container images where possible, which can then run on services such as AWS Fargate or Azure Container Apps with fewer changes. Abstract infrastructure using IaC tools like Terraform.
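
At the code level, one way to limit lock-in is to keep business logic in a provider-neutral core and confine each platform's event format to a thin adapter. The sketch below assumes the AWS Lambda proxy-integration event shape (`event.body` as a JSON string, a response with `statusCode`, `headers`, and `body`). The core function and its static result are placeholders for a real model call.

```javascript
// Provider-neutral core: plain object in, plain result out, no cloud types.
function recommendCore({ userId, currentProduct }) {
  if (!userId || !currentProduct) {
    return { status: 400, body: { error: 'userId and currentProduct are required' } };
  }
  // Placeholder for the real inference call (assumption for illustration).
  return { status: 200, body: { recommendations: [`related-to-${currentProduct}`] } };
}

// Thin adapter: translates the Lambda proxy event to and from the core.
function lambdaAdapter(event) {
  const headers = { 'Content-Type': 'application/json' };
  let input;
  try {
    input = JSON.parse(event.body ?? '{}');
  } catch {
    return { statusCode: 400, headers, body: JSON.stringify({ error: 'invalid JSON' }) };
  }
  const { status, body } = recommendCore(input ?? {});
  return { statusCode: status, headers, body: JSON.stringify(body) };
}

// Entry point a Lambda deployment would export; other platforms would get
// their own small adapter around the same recommendCore function.
const handler = async (event) => lambdaAdapter(event);
```

Moving to another provider then means writing a new adapter of similar size, while `recommendCore` and its tests stay unchanged.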

Source Signals

- Gartner (2025): Predicts that by 2026, over 70% of new enterprise applications will incorporate AI-driven UI components for enhanced personalization.

- Cloud Native Computing Foundation (CNCF) (2024): Reports a 45% year-over-year increase in GitOps adoption for serverless deployments across enterprise organizations.

- AWS re:Invent (2025 Keynote): Highlighted a 30% average reduction in cloud spend for enterprises implementing FinOps best practices specifically for AI inference workloads on serverless.

- Hugging Face (2025 Report): Notes a 60% increase in enterprise contributions to and fine-tuning of open-source LLMs for domain-specific applications.

Technical FAQ

Q1: How do we ensure AI model updates in our serverless UI don't break existing user experiences?

A1: Implement a robust MLOps pipeline integrated with your GitOps flow. Utilize blue/green deployments or canary releases for AI models, routing a small percentage of traffic to the new model first. Monitor key performance indicators (KPIs) and user feedback rigorously. Automated rollback mechanisms, triggered by predefined error thresholds, are essential to quickly revert to a stable model version if issues arise.
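
The "predefined error thresholds" in A1 can be expressed as a small policy that the pipeline evaluates against canary metrics. The sketch below is illustrative only. The threshold values, metric names, and the three-way `wait` / `rollback` / `promote` outcome are assumptions, not recommended defaults.

```javascript
// Assumed policy values; tune these to your own service-level objectives.
const ROLLBACK_POLICY = {
  maxErrorRate: 0.02,
  maxP95LatencyMs: 800,
  minSampleSize: 500,
};

// Decides what to do with a canary model based on observed metrics.
function evaluateCanary(metrics, policy = ROLLBACK_POLICY) {
  if (metrics.requests < policy.minSampleSize) {
    return { action: 'wait', reasons: ['insufficient sample size'] };
  }
  const reasons = [];
  const errorRate = metrics.errors / metrics.requests;
  if (errorRate > policy.maxErrorRate) {
    reasons.push(`error rate ${errorRate} exceeds ${policy.maxErrorRate}`);
  }
  if (metrics.p95LatencyMs > policy.maxP95LatencyMs) {
    reasons.push(`p95 latency ${metrics.p95LatencyMs}ms exceeds ${policy.maxP95LatencyMs}ms`);
  }
  return { action: reasons.length > 0 ? 'rollback' : 'promote', reasons };
}

console.log(evaluateCanary({ requests: 1000, errors: 50, p95LatencyMs: 400 }));
```

Returning the reasons alongside the action gives the audit trail something concrete to record when a rollback is triggered.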

Q2: What's the recommended strategy for managing dependencies and cold starts for AI-heavy serverless functions?

A2: For dependencies, use custom runtimes or container images for your serverless functions (e.g., AWS Lambda container images, Azure Functions custom handlers) to pre-package large AI libraries. Mitigate cold starts by provisioning concurrency (e.g., AWS Lambda Provisioned Concurrency, Azure Functions Premium Plan) for critical AI inference functions. Implement warm-up routines that periodically invoke functions to keep them active, especially during anticipated peak hours.
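
A warm-up routine works best when the function recognizes warm-up pings and returns early while still paying the model-loading cost once per instance. The sketch below shows that pattern. The `warmup` event field, the response shape, and the stand-in model are all hypothetical.

```javascript
// Module scope persists across invocations on a warm instance, so the
// model is loaded at most once per instance.
let modelInstance = null;
let loadCount = 0;

// Stand-in for an expensive load such as reading weights from disk.
function loadModel() {
  loadCount += 1;
  return {
    predict: (text) => ({ label: text.length > 20 ? 'long' : 'short' }),
  };
}

function getModel() {
  if (modelInstance === null) modelInstance = loadModel();
  return modelInstance;
}

// Warm-up pings load the model and return immediately; real requests
// reuse the already-loaded model.
function handleInvocation(event) {
  const model = getModel();
  if (event.warmup === true) {
    return { statusCode: 204, body: '' };
  }
  const text = String(event.text ?? '');
  return { statusCode: 200, body: JSON.stringify(model.predict(text)) };
}
```

A scheduled trigger would send `{ "warmup": true }` at whatever interval keeps instances alive on your platform. Provisioned concurrency remains the more predictable option when latency targets are strict.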

Q3: How can we measure the ROI of investing in AI-driven UI/UX, particularly regarding FinOps?

A3: ROI can be measured by tracking metrics such as increased user engagement, conversion rates, reduced customer support costs (due to more intuitive interfaces), and improved task completion rates. From a FinOps perspective, measure the direct cost savings from optimized serverless AI inference, reduced operational overhead in UI development (due to AI assistance), and the impact of AI-driven personalization on user retention and lifetime value, which directly contributes to revenue growth.

Conclusion

The journey to fully realize the potential of AI-driven UI/UX in serverless enterprise environments is complex but incredibly rewarding. At Apex Logic, we believe that by meticulously architecting with a focus on responsible AI, embracing FinOps for cost efficiency, and leveraging GitOps for unparalleled release automation and engineering productivity, organizations can build the next generation of user experiences. The blueprint outlined here provides a strategic roadmap for CTOs and lead engineers to navigate the opportunities and challenges of 2026 and beyond, ensuring ethical innovation and sustained competitive advantage through intelligent, adaptive, and efficient web development.
