
The Imperative for Verifiable Infrastructure Integrity in 2026

As Lead Cybersecurity & AI Architect at Apex Logic, I've witnessed firsthand the accelerating demand for verifiable trust and compliance in enterprise infrastructure, particularly where it hosts increasingly sophisticated AI workloads. In 2026, the enterprise landscape is defined by escalating regulatory pressure for responsible AI and stringent data governance. This necessitates a fundamental shift beyond traditional infrastructure management to an architecture where the integrity, provenance, and configuration of every component can be continuously attested. Our focus at Apex Logic is on pioneering an AI-driven FinOps GitOps architecture that provides this foundational trust, directly enhancing engineering productivity and release automation for critical AI systems.

Beyond Traditional Infrastructure as Code

While Infrastructure as Code (IaC) revolutionized provisioning, it often falls short of guaranteeing continuous compliance and verifiable integrity after deployment. The challenge lies in drift detection: confirming that the deployed state matches the desired state defined in code, and that no unauthorized changes have occurred. For AI workloads, where data sensitivity and model integrity are paramount, any deviation can have significant security, financial, and ethical ramifications. We need a mechanism that not only defines infrastructure but also continuously validates its adherence to policies, cost controls, and security baselines, with an immutable audit trail.
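As a minimal sketch of what drift detection means in practice, the following Python compares a desired state (as it might be rendered from Git) with an observed state. The function name `find_drift` and the sample dictionaries are illustrative assumptions, not part of any specific tool; real controllers perform this comparison against live cluster or cloud APIs.

```python
from typing import Any


def find_drift(desired: dict[str, Any], actual: dict[str, Any], path: str = "") -> list[str]:
    """Return human-readable differences between desired and actual state."""
    drift: list[str] = []
    for key in sorted(set(desired) | set(actual)):
        location = f"{path}.{key}" if path else key
        if key not in actual:
            drift.append(f"{location}: missing from actual state")
        elif key not in desired:
            drift.append(f"{location}: not declared in Git")
        elif isinstance(desired[key], dict) and isinstance(actual[key], dict):
            # Recurse into nested configuration blocks.
            drift.extend(find_drift(desired[key], actual[key], location))
        elif desired[key] != actual[key]:
            drift.append(f"{location}: expected {desired[key]!r}, found {actual[key]!r}")
    return drift


# Hypothetical sample data: what Git declares versus what is running.
desired_state = {"replicas": 3, "resources": {"limits": {"cpu": "500m", "memory": "1Gi"}}}
actual_state = {"replicas": 5, "resources": {"limits": {"cpu": "500m"}}, "debug": True}

for finding in find_drift(desired_state, actual_state):
    print(finding)
```

Each finding names the exact configuration path that diverged, which is the kind of evidence an auditor or an automated remediation step would need.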

The Nexus of Responsible AI and Enterprise Infrastructure

The principles of responsible AI and AI alignment are not abstract concepts confined to model development; they must permeate the entire operational stack. An AI system's trustworthiness is intrinsically linked to the trustworthiness of its underlying infrastructure. If the infrastructure hosting the training data, model artifacts, or inference engines is compromised, misconfigured, or non-compliant, the integrity of the AI system itself is undermined. Our architectural approach ensures that compliance and security policies are baked into the infrastructure definitions from the ground up, providing verifiable evidence that AI systems operate within defined ethical and regulatory boundaries. This proactive stance is crucial for maintaining public trust and avoiding significant penalties in the evolving regulatory environment of 2026.

Architecting Apex Logic's AI-Driven FinOps GitOps Framework

Our proposed framework leverages GitOps as the operational paradigm, extending its reach with AI-driven insights and robust FinOps integration. This holistic approach ensures not just operational efficiency but also financial accountability and verifiable compliance across the enterprise.

Core Architectural Principles

- Git as the Single Source of Truth: All infrastructure, application, policy, and financial configuration is version-controlled in Git.

- Declarative Everything: Infrastructure and policies are described declaratively, enabling idempotent and auditable deployments.

- Continuous Reconciliation: Automated agents continuously compare the desired state (Git) with the actual state (production) and reconcile any deviations.

- AI-Driven Observability & Optimization: Machine learning models analyze operational data for anomaly detection, security threats, and cost optimization recommendations.

- Policy-as-Code: Security, compliance, and cost policies are defined as code and enforced automatically.

- Verifiable Attestation: Every change, deployment, and policy enforcement leaves an immutable, auditable trail.
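To make the idea of an immutable, auditable trail concrete, here is a small sketch of a hash-chained log in Python. It is an illustration under stated assumptions: the entry layout and the helper names `append_entry` and `verify_log` are hypothetical, and Git's own commit history already links each commit to its parent by hash in a comparable way.

```python
import hashlib
import json


def append_entry(log: list[dict], event: dict) -> dict:
    """Append an event whose hash also covers the previous entry's hash."""
    previous_hash = log[-1]["hash"] if log else "0" * 64
    payload = json.dumps({"event": event, "previous_hash": previous_hash}, sort_keys=True)
    entry = {
        "event": event,
        "previous_hash": previous_hash,
        "hash": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
    }
    log.append(entry)
    return entry


def verify_log(log: list[dict]) -> bool:
    """Recompute every hash; an edited or reordered entry breaks the chain."""
    previous_hash = "0" * 64
    for entry in log:
        if entry["previous_hash"] != previous_hash:
            return False
        payload = json.dumps({"event": entry["event"], "previous_hash": previous_hash}, sort_keys=True)
        if hashlib.sha256(payload.encode("utf-8")).hexdigest() != entry["hash"]:
            return False
        previous_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, {"action": "deploy", "commit": "abc123", "actor": "ci-bot"})
append_entry(audit_log, {"action": "policy-check", "result": "pass"})
print(verify_log(audit_log))  # True

audit_log[0]["event"]["actor"] = "someone-else"  # simulate tampering
print(verify_log(audit_log))  # False
```

Because every hash depends on the one before it, changing any past entry invalidates the rest of the chain, which is what makes the trail tamper-evident rather than merely append-only by convention.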

Git as the Single Source of Truth for Everything

In our architecture, Git isn't just for code; it's the definitive record for infrastructure definitions (Terraform, Kubernetes manifests), operational policies (Open Policy Agent - OPA), security configurations, and even FinOps budgets and cost allocation rules. This centralization ensures that every change is reviewed, approved, and versioned, establishing a clear lineage and accountability. Changes to infrastructure are pull requests, triggering automated pipelines for validation, testing, and deployment. This paradigm significantly boosts release automation and reduces human error.

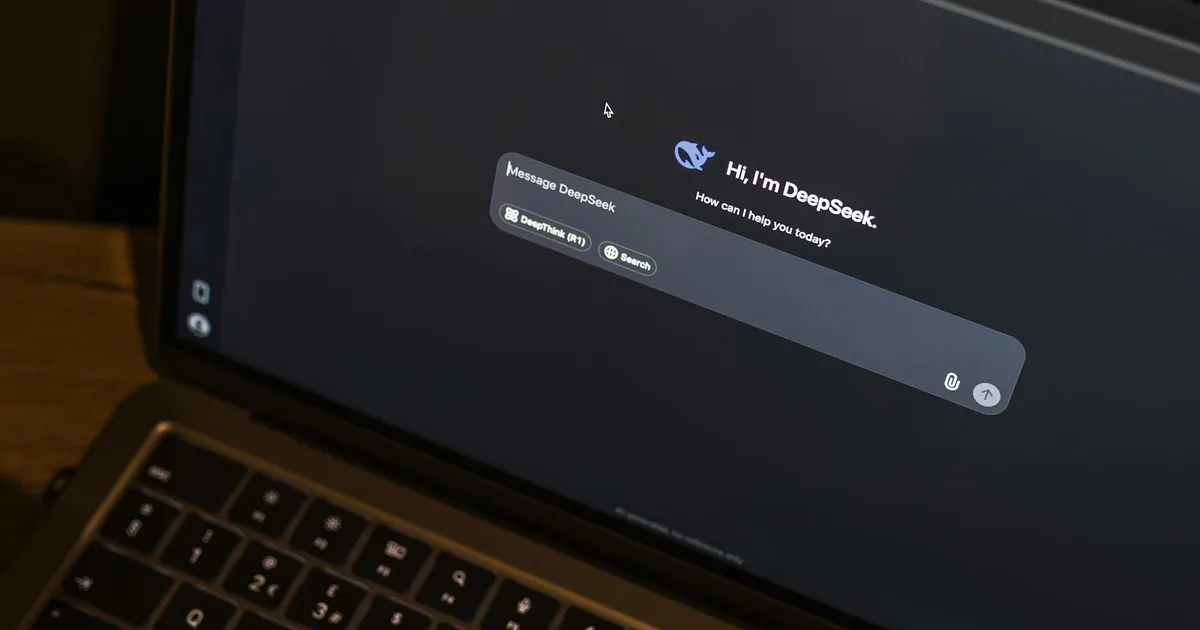

AI-Driven Observability and Anomaly Detection

Integrating AI into our GitOps workflows transforms reactive monitoring into proactive intelligence. ML models consume telemetry data from infrastructure, applications, and security logs, identifying patterns indicative of performance bottlenecks, security breaches, or cost inefficiencies. For instance, an AI model could detect anomalous resource consumption in a Kubernetes cluster that deviates from historical patterns, signaling a potential misconfiguration or attack. This AI-driven FinOps GitOps approach provides real-time insights that traditional rule-based systems often miss, enabling rapid response and continuous optimization.
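The resource-consumption example can be illustrated with a deliberately simple statistical stand-in. The sketch below uses a z-score against historical values rather than a trained ML model; the function name, the threshold of 3.0, and the sample CPU figures are assumptions chosen for illustration only.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag `latest` if it is more than `threshold` standard deviations from the historical mean."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    spread = stdev(history)
    if spread == 0:
        return latest != history[0]
    return abs(latest - mean(history)) / spread > threshold


# Hypothetical CPU usage (in cores) for one namespace over recent intervals.
cpu_cores_history = [4.1, 3.9, 4.0, 4.2, 3.8, 4.0, 4.1]

print(is_anomalous(cpu_cores_history, 4.3))  # False: within normal variation
print(is_anomalous(cpu_cores_history, 9.5))  # True: far outside historical pattern
```

A production system would likely account for seasonality and correlate several signals, but the shape is the same: learn a baseline from history, then score new telemetry against it.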

Policy-as-Code and Compliance Pipelines

To uphold responsible AI and regulatory compliance, policies are encoded and enforced automatically. Tools like OPA allow us to define granular policies for resource provisioning, network configurations, data access, and cost ceilings. These policies are part of the Git repository, reviewed like any other code, and applied by GitOps controllers. This ensures that only compliant infrastructure can be provisioned and that any drift is immediately flagged and remediated, providing verifiable evidence for auditors that AI alignment principles are maintained from the infrastructure layer up.

Implementation Details and Practical Considerations

Implementing an AI-driven FinOps GitOps architecture requires a thoughtful selection and integration of cloud-native tools and practices.

Tooling Stack for AI-Driven FinOps GitOps

- Version Control: Git (GitHub, GitLab, Bitbucket) for all declarative configurations.

- GitOps Controllers: Argo CD or Flux for Kubernetes deployments, extended for broader infrastructure.

- IaC Tools: Terraform for cloud resource provisioning, Ansible for configuration management.

- Policy Enforcement: Open Policy Agent (OPA) for Policy-as-Code across the stack.

- Observability: Prometheus/Grafana for metrics, Loki/ELK for logs, Jaeger for traces.

- AI/ML Platform: Cloud provider ML services (AWS SageMaker, Azure ML, GCP Vertex AI) or open-source (Kubeflow) for AI-driven insights.

- FinOps Tools: Cloud provider cost management APIs, custom dashboards, and specialized FinOps platforms integrated via APIs, often orchestrated by `serverless` functions.

- Security Scanning: Static Application Security Testing (SAST), Dynamic Application Security Testing (DAST), Container vulnerability scanning integrated into CI/CD.

Integrating FinOps for Cost Optimization

FinOps is not an afterthought; it's a core component. By treating cost policies as code within Git, we can enforce budgets, tag resources for accurate chargebacks, and implement optimization strategies automatically. AI models analyze spending patterns, identify idle resources, suggest optimal instance types, and predict future costs, feeding these recommendations back into the GitOps pipeline for automated adjustments. This proactive, AI-driven FinOps approach ensures that financial efficiency is intrinsically linked to infrastructure provisioning.
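A cost policy expressed as code might look like the following Python sketch, which checks chargeback tags and per-team budgets before a change is merged. The required tag set, the `Resource` fields, and the budget figures are all hypothetical; in a real pipeline the cost estimates would come from a cloud provider's pricing or cost management API.

```python
from dataclasses import dataclass

REQUIRED_TAGS = {"team", "cost-center", "environment"}  # hypothetical tagging policy


@dataclass
class Resource:
    name: str
    team: str
    monthly_cost_usd: float
    tags: dict[str, str]


def check_cost_policy(resources: list[Resource], team_budgets_usd: dict[str, float]) -> list[str]:
    """Return violations: missing chargeback tags and teams over their monthly budget."""
    violations: list[str] = []
    spend_by_team: dict[str, float] = {}
    for resource in resources:
        missing = sorted(REQUIRED_TAGS - set(resource.tags))
        if missing:
            violations.append(f"{resource.name}: missing tags {missing}")
        spend_by_team[resource.team] = spend_by_team.get(resource.team, 0.0) + resource.monthly_cost_usd
    for team, spend in sorted(spend_by_team.items()):
        budget = team_budgets_usd.get(team)
        if budget is not None and spend > budget:
            violations.append(f"{team}: projected spend ${spend:.2f} exceeds budget ${budget:.2f}")
    return violations


# Hypothetical resources proposed in a pull request.
resources = [
    Resource("gpu-train-01", "ml-platform", 1800.0,
             {"team": "ml-platform", "cost-center": "cc-42", "environment": "prod"}),
    Resource("gpu-train-02", "ml-platform", 1500.0, {"team": "ml-platform"}),
]

for violation in check_cost_policy(resources, {"ml-platform": 3000.0}):
    print(violation)
```

Run as a pipeline step, a non-empty violation list would fail the pull request, so budget and tagging rules are enforced at the same point as any other code review gate.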

Code Example: Policy Enforcement with OPA and GitOps

Consider a simple OPA policy to ensure all Kubernetes deployments have resource limits defined, a critical aspect of stability and cost control. This Rego policy lives in Git alongside our Kubernetes manifests.

```rego
package kubernetes.admission.k8s_resource_limits_check

# Deny any Deployment container that does not declare a CPU limit.
deny[msg] {
    input.request.kind.kind == "Deployment"
    some i
    container := input.request.object.spec.template.spec.containers[i]
    not container.resources.limits.cpu
    msg := sprintf("Deployment '%v' container '%v' must have CPU limits defined.", [input.request.object.metadata.name, container.name])
}

# Deny any Deployment container that does not declare a memory limit.
deny[msg] {
    input.request.kind.kind == "Deployment"
    some i
    container := input.request.object.spec.template.spec.containers[i]
    not container.resources.limits.memory
    msg := sprintf("Deployment '%v' container '%v' must have Memory limits defined.", [input.request.object.metadata.name, container.name])
}
```

This policy, once committed to Git, would be picked up by an OPA-based admission controller. As written, it evaluates the raw AdmissionReview under `input.request`; with OPA Gatekeeper, the same logic would need to be wrapped in a ConstraintTemplate, which expects `violation` rules evaluated against `input.review`. The rules also use the pre-1.0 Rego rule syntax; OPA 1.0 and later expect the form `deny contains msg if { ... }`. Any attempt to deploy a Kubernetes Deployment without CPU or memory limits would be denied, ensuring compliance before resources are even provisioned. This is a direct example of how GitOps and policy-as-code combine to enforce integrity and support FinOps goals by preventing runaway costs from unconstrained resources.
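To show what input the policy evaluates, the sketch below mirrors the two `deny` rules in Python and runs them against a trimmed AdmissionReview payload. This is only an illustration of the logic, not a replacement for testing the Rego itself (OPA ships an `opa test` command for that); the sample Deployment name and container are hypothetical.

```python
def deny(admission_review: dict) -> list[str]:
    """Mirror the two Rego deny rules: one message per container per missing limit."""
    request = admission_review["request"]
    if request["kind"]["kind"] != "Deployment":
        return []
    deployment = request["object"]
    name = deployment["metadata"]["name"]
    messages: list[str] = []
    for container in deployment["spec"]["template"]["spec"]["containers"]:
        limits = container.get("resources", {}).get("limits", {})
        for key, label in (("cpu", "CPU"), ("memory", "Memory")):
            # Approximates Rego's `not`, which also fires on a literal `false` value.
            if key not in limits:
                messages.append(
                    f"Deployment '{name}' container '{container['name']}' "
                    f"must have {label} limits defined."
                )
    return messages


# Hypothetical, heavily trimmed AdmissionReview request.
sample_review = {
    "request": {
        "kind": {"kind": "Deployment"},
        "object": {
            "metadata": {"name": "inference-api"},
            "spec": {"template": {"spec": {"containers": [
                {"name": "server", "resources": {"limits": {"cpu": "500m"}}},
            ]}}},
        },
    }
}

print(deny(sample_review))
```

The sample container declares a CPU limit but no memory limit, so only the memory rule produces a message. Unlike the Rego version, which yields an unordered set, this sketch returns messages in container order.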

Trade-offs and Challenges

While powerful, this architecture presents trade-offs. The initial investment in tooling, training, and cultural shift can be substantial. Complexity increases due to the integration of multiple systems and the need for specialized skills in cloud-native, AI/ML, and policy engineering. Data privacy and ethical considerations for AI models used in security and FinOps also require careful management. Organizations must be prepared for a steep learning curve and commit to continuous improvement.

Ensuring Resilience: Failure Modes and Mitigation Strategies

A robust architecture must anticipate and mitigate potential failure modes to maintain continuous verifiable integrity.

Git Repository Compromise

If the central Git repository is compromised, the single source of truth becomes a single point of failure. Mitigation includes stringent access controls (MFA, least privilege), signed commits (GPG), branch protection rules, immutable audit logs, and regular backups. Furthermore, GitOps controllers should be configured to reject changes if the commit signature is invalid or if the committer is not authorized.
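The signature-and-committer gate described above can be sketched in a few lines of Python. The allowlist and function names are hypothetical; the Git format placeholders `%G?` (signature status, where `G` means a good signature) and `%ce` (committer email) are standard `git log` pretty-format codes. GitOps controllers typically provide their own commit verification features, which would normally be preferred over a custom script.

```python
import subprocess

TRUSTED_COMMITTERS = {"release-bot@example.com"}  # hypothetical allowlist


def commit_is_deployable(signature_status: str, committer_email: str) -> bool:
    """Accept only commits with a good signature ('G') from an allowlisted committer."""
    return signature_status == "G" and committer_email in TRUSTED_COMMITTERS


def inspect_commit(repo_path: str, revision: str = "HEAD") -> tuple[str, str]:
    """Ask Git for the signature status (%G?) and committer email (%ce) of one commit."""
    output = subprocess.run(
        ["git", "-C", repo_path, "log", "-1", "--format=%G? %ce", revision],
        check=True, capture_output=True, text=True,
    ).stdout.strip()
    status, email = output.split(" ", 1)
    return status, email


# The decision logic can be exercised without a repository:
print(commit_is_deployable("G", "release-bot@example.com"))  # True
print(commit_is_deployable("N", "release-bot@example.com"))  # False: unsigned commit
print(commit_is_deployable("G", "unknown@example.com"))      # False: not allowlisted
```

In a pipeline, `inspect_commit` would be called on the revision about to be reconciled, and a `False` result would halt the sync before anything reaches production.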

AI Model Drift/Bias in FinOps/Security

AI models used for anomaly detection or cost optimization can drift over time or exhibit bias, leading to false positives, missed threats, or suboptimal financial recommendations. Mitigation requires continuous model monitoring, retraining with fresh data, A/B testing of new model versions, and a human-in-the-loop validation process for critical decisions. Explainable AI (XAI) techniques are crucial to understand model decisions and build trust.
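One common way to monitor for input drift is the Population Stability Index (PSI), which compares the distribution of a feature at training time with its distribution in live traffic. The sketch below is a simplified version using equal-width bins; the bin count, the floor value for empty bins, and the sample data are assumptions for illustration, and this is one possible drift metric rather than the method prescribed by this architecture.

```python
import math


def population_stability_index(expected: list[float], observed: list[float], bins: int = 5) -> float:
    """Compare two samples of one feature; larger values mean a bigger distribution shift."""
    low, high = min(expected), max(expected)
    width = (high - low) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(sample: list[float]) -> list[float]:
        counts = [0] * bins
        for value in sample:
            index = int((value - low) / width)
            counts[min(max(index, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(count / len(sample), 1e-6) for count in counts]

    p_expected, p_observed = proportions(expected), proportions(observed)
    return sum((o - e) * math.log(o / e) for e, o in zip(p_expected, p_observed))


# Hypothetical daily cost per workload (USD) seen at training time versus now.
training_sample = [10, 12, 11, 13, 12, 14, 11, 12, 13, 12]
live_sample = [13, 14, 14, 15, 14, 13, 15, 14, 14, 13]

print(population_stability_index(training_sample, training_sample))  # 0.0: identical distributions
print(population_stability_index(training_sample, live_sample) > 0.25)  # True: large shift
```

A score above an agreed threshold (0.25 is used here purely as an example) could trigger retraining or route the model's recommendations to human review instead of automated action.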

Policy Overreach or Misconfiguration

Incorrectly defined policies can inadvertently block legitimate operations or create security gaps. Mitigation strategies include rigorous testing of policies in staging environments, gradual rollout strategies (e.g., initially in 'audit' mode before 'enforce'), versioning of policies in Git, and automated static analysis of policy code (e.g., Rego linting). Rollback capabilities are essential to quickly revert problematic policies.

Vendor Lock-in (Cloud/Tooling)

Heavy reliance on specific cloud provider services or proprietary tools can lead to vendor lock-in. Mitigation involves designing with abstraction layers (e.g., Terraform modules, Kubernetes), favoring open-source tools where viable, and maintaining a multi-cloud or hybrid-cloud strategy where appropriate. This flexibility is key to long-term adaptability and cost control.

Source Signals

- Gartner: Predicts that by 2027, 75% of organizations will have implemented FinOps practices, up from 25% in 2022, driven by cloud cost optimization.

- NIST: Their AI Risk Management Framework (AI RMF) emphasizes the need for robust governance and verifiable integrity across the AI lifecycle, including underlying infrastructure.

- CNCF: Reports significant adoption of GitOps, with 80% of organizations using or planning to use GitOps for managing Kubernetes and cloud-native infrastructure.

- Forrester: Highlights that enterprises adopting mature FinOps practices achieve 15-20% cloud cost savings within the first year.

Technical FAQ

Q1: How does this architecture specifically address 'verifiable enterprise infrastructure integrity' beyond standard IaC?

A1: Standard IaC defines the desired state. Our architecture adds continuous, automated reconciliation via GitOps controllers that detect and remediate drift. Crucially, Policy-as-Code (e.g., OPA) enforces security and compliance rules at every stage, from CI/CD to runtime admission control, while every change is recorded in Git's hash-linked history. Combined with signed commits, this provides cryptographically verifiable evidence of configuration provenance and adherence, enabling verifiable attestation for auditors.

Q2: What's the role of 'serverless' functions in this AI-driven FinOps GitOps framework?

A2: Serverless functions are instrumental for event-driven automation and integration. They can trigger GitOps reconciliation loops based on external events, implement custom FinOps cost-optimization actions (e.g., rightsizing instances based on AI recommendations), or act as webhooks for security alerts. Their ephemeral, scalable nature makes them ideal for extending the automated capabilities of the GitOps pipeline without maintaining dedicated compute resources.

Q3: How do you prevent AI models used for FinOps or security from becoming a new attack vector or source of bias?

A3: Preventing this requires a multi-faceted approach. First, data used for training AI models must be securely sourced, anonymized where necessary, and continuously validated for integrity. Second, the models themselves must be regularly monitored for drift and bias using explainability techniques (XAI) and performance metrics. Third, critical decisions made by AI should always have a human-in-the-loop for oversight and override capabilities. Finally, the entire MLOps pipeline for these models must itself be governed by GitOps principles, ensuring version control, policy enforcement, and auditability.

In 2026, the convergence of AI, cloud-native principles, and stringent regulatory demands presents both challenges and unparalleled opportunities. By embracing an AI-driven FinOps GitOps architecture, organizations like those partnered with Apex Logic can not only meet these demands but also establish a competitive advantage through superior engineering productivity, robust security, and an unwavering commitment to responsible AI and AI alignment. This blueprint for architecting verifiable enterprise infrastructure integrity is not merely an operational upgrade; it's a strategic imperative for the future of the intelligent enterprise.
