
The Imperative for Verifiable AI Alignment and Responsible AI Governance in 2026

As Lead Cybersecurity & AI Architect at Apex Logic, I've witnessed the rapid acceleration of AI adoption within the enterprise, particularly in regulated sectors. The year 2026 marks a critical inflection point where merely deploying AI models is no longer sufficient. Enterprises face an urgent and intensifying need to demonstrate verifiable AI alignment and adhere rigorously to responsible AI principles. This mandate stems from evolving global regulations, increasing stakeholder scrutiny, and the inherent risks of custom AI models operating within sensitive, regulated workflows. The challenge is not just to build performant AI, but to build transparent, auditable, and accountable AI.

Evolving Regulatory Landscape and Enterprise Demands

The regulatory landscape is rapidly solidifying, with frameworks like the EU AI Act, NIST AI Risk Management Framework, and various industry-specific guidelines (e.g., financial services, healthcare) demanding unprecedented levels of transparency and control over AI systems. For CTOs and lead engineers, this translates into a non-negotiable requirement for robust governance throughout the entire AI model lifecycle. Failure to comply can result in significant financial penalties, reputational damage, and loss of market trust. This necessitates a proactive, architectural approach to embed compliance and ethics from conception to retirement of every AI artifact. Apex Logic believes that the traditional approaches to MLOps, while valuable, often fall short in providing the continuous, verifiable assurances demanded by these new regulations.

Challenges in Custom AI Model Lifecycle Management

The complexity of managing custom AI models—from data ingestion and feature engineering to model training, deployment, monitoring, and retraining—introduces numerous challenges. These include:

- Lack of Reproducibility: Difficulty in recreating specific model versions, training environments, and data states for audit purposes.

- Opaque Decision-Making: The 'black box' problem, hindering explainability and interpretability, crucial for regulatory compliance.

- Inconsistent Governance: Ad-hoc policy enforcement across different teams or models, leading to compliance gaps.

- Cost Invisibility: Difficulty in attributing compute, storage, and tooling costs directly to specific responsible AI initiatives or compliance mandates.

- Manual Audit Trails: Labor-intensive processes for generating compliance reports, prone to errors and delays.

These challenges underscore the need for a paradigm shift in how we architect and manage AI systems, moving towards an AI-driven FinOps & GitOps framework that integrates governance, cost management, and operational excellence at its core.

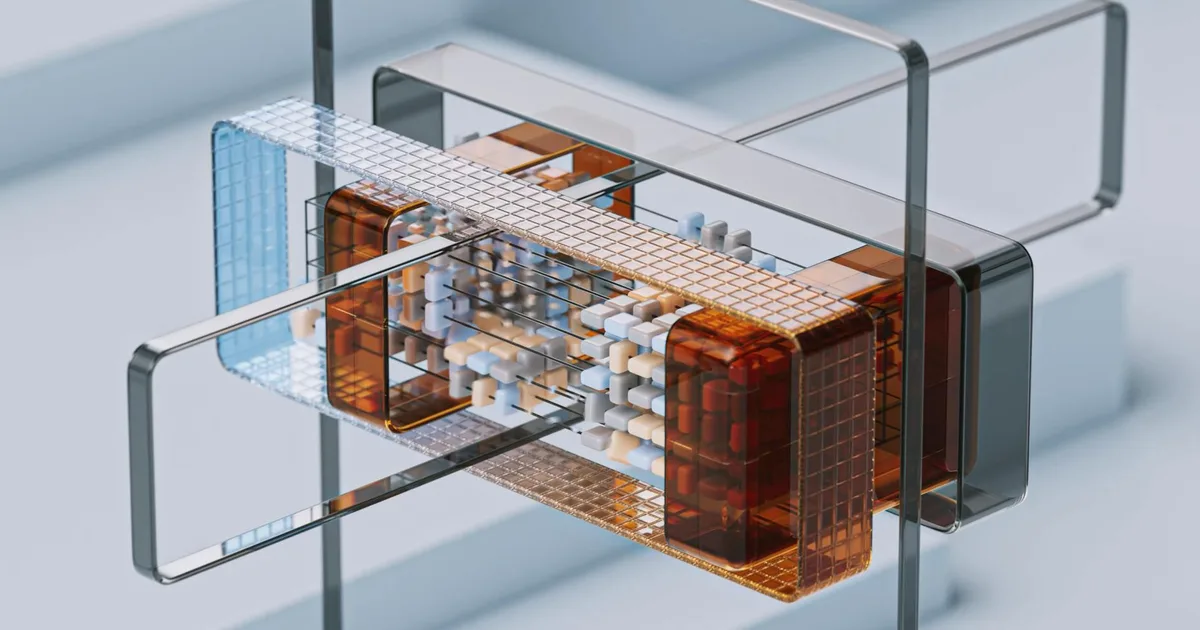

Architecting AI-Driven FinOps & GitOps for the AI Lifecycle

Our vision at Apex Logic for 2026 is to provide a comprehensive architectural blueprint that extends the proven principles of GitOps and FinOps beyond infrastructure to encompass the entire AI model lifecycle. This integrated approach is designed to deliver continuous explainability, auditability, and compliance, while boosting engineering productivity and enabling release automation for auditable AI systems.

GitOps for Declarative AI Governance and Model Versioning

GitOps, at its heart, is about using Git as the single source of truth for declarative infrastructure and application configuration. We extend this philosophy to AI governance by treating all aspects of the AI lifecycle—model code, training data manifests, inference configurations, monitoring policies, and crucially, responsible AI governance policies—as version-controlled artifacts within Git repositories. This 'AI-as-Code' approach ensures:

- Declarative Governance: Policies for model validation (e.g., bias detection thresholds, explainability report generation), deployment gates (e.g., requiring specific fairness metrics), and data lineage are defined as code.

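- Auditability and Reproducibility: Every change, approval, and deployment is tracked in Git, providing a tamper-evident audit trail, provided that history rewriting is restricted (for example, with protected branches and signed commits). Any model version, along with its associated data manifests and policies, can be reproduced from the commit that defined it.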

- Automated Reconciliation: GitOps agents continuously observe the desired state (in Git) and the actual state (in production), automatically remediating any drift.
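The reconciliation idea in the last bullet can be sketched in a few lines. This is a minimal illustration, not how any particular GitOps controller is implemented: the state dictionaries, the `registry.example` image names, and the `diff_state` and `reconcile` helpers are all hypothetical, and "remediation" here simply means replacing the observed state with the declared one.

```python
import copy
from typing import Any


def diff_state(desired: dict[str, Any], actual: dict[str, Any], prefix: str = "") -> list[str]:
    """Return dotted paths where the actual state differs from the desired state."""
    drifted: list[str] = []
    for key in sorted(set(desired) | set(actual)):
        path = f"{prefix}{key}"
        want, have = desired.get(key), actual.get(key)
        if isinstance(want, dict) and isinstance(have, dict):
            # Recurse into nested sections so the report names the exact field.
            drifted.extend(diff_state(want, have, prefix=f"{path}."))
        elif want != have:
            drifted.append(path)
    return drifted


def reconcile(desired: dict[str, Any], actual: dict[str, Any]) -> tuple[dict[str, Any], list[str]]:
    """Return the state after remediation and the list of drifted paths."""
    drifted = diff_state(desired, actual)
    # A real controller would call the platform API; this sketch just
    # converges on a copy of the desired state when drift is found.
    remediated = copy.deepcopy(desired) if drifted else actual
    return remediated, drifted


# Hypothetical desired state (as declared in Git) and observed production state.
desired = {
    "model": {"name": "fraud-detection-v2", "image": "registry.example/fraud:2.1.0"},
    "monitoring": {"psiThreshold": 0.1},
}
actual = {
    "model": {"name": "fraud-detection-v2", "image": "registry.example/fraud:2.0.9"},
    "monitoring": {"psiThreshold": 0.25},
}

new_state, drifted = reconcile(desired, actual)
print(drifted)
```

In this example the image tag and the drift threshold were both changed outside Git, so both paths are reported and the state converges back to what the repository declares.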

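Consider a practical example of a declarative policy for a model deployment within a Kubernetes environment, enforced via a GitOps pipeline. The manifest is an illustrative custom resource rather than an OPA policy itself (OPA policies are written in Rego); a policy engine such as Open Policy Agent (OPA) would evaluate its thresholds at the deployment gate: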

    apiVersion: policy.apexlogic.com/v1alpha1
    kind: ModelDeploymentPolicy
    metadata:
      name: high-risk-model-policy
    spec:
      modelName: "fraud-detection-v2"
      riskLevel: "high"
      requirements:
        - type: "ExplainabilityReport"
          minScore: 0.85 # Requires LIME/SHAP score above 0.85
          format: "PDF"
        - type: "BiasDetection"
          maxDisparity: 0.05 # Max acceptable disparity for protected attributes
          metric: "DemographicParity"
        - type: "DataDriftMonitoring"
          threshold: 0.1 # Max acceptable PSI (Population Stability Index)
          alertTarget: "slack://#ai-ops-alerts"
      approvalWorkflow:
        groups: ["ai-ethics-committee", "legal-compliance"]
        minApprovals: 2

This YAML manifest, stored in Git, declares that any deployment of fraud-detection-v2 must meet specific explainability, bias, and data drift criteria, and requires explicit approval from the AI Ethics Committee and Legal/Compliance teams. The GitOps controller, integrated with CI/CD, would enforce these gates before promoting the model to production.
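To make the gate logic concrete, here is a minimal Python sketch of how a pipeline step might evaluate a candidate release against the thresholds in the manifest. It is an assumption-laden illustration, not the enforcement mechanism of any specific controller: the `Evidence` record, the `evaluate` function, and the choice to treat `minScore` as an inclusive lower bound are all hypothetical, and the policy is hard-coded as a dictionary where a real pipeline would parse the YAML from the Git checkout.

```python
from dataclasses import dataclass, field

# Mirrors the relevant fields of the manifest; hard-coded to keep the sketch
# self-contained.
POLICY = {
    "modelName": "fraud-detection-v2",
    "requirements": [
        {"type": "ExplainabilityReport", "minScore": 0.85},
        {"type": "BiasDetection", "maxDisparity": 0.05, "metric": "DemographicParity"},
        {"type": "DataDriftMonitoring", "threshold": 0.1},
    ],
    "approvalWorkflow": {
        "groups": ["ai-ethics-committee", "legal-compliance"],
        "minApprovals": 2,
    },
}


@dataclass
class Evidence:
    """Hypothetical record produced by CI for one candidate release."""
    explainability_score: float
    demographic_parity_gap: float
    psi: float
    approvals: set = field(default_factory=set)


def evaluate(policy: dict, ev: Evidence) -> list:
    """Return the names of violated gates; an empty list means 'promote'."""
    violations = []
    for req in policy["requirements"]:
        kind = req["type"]
        if kind == "ExplainabilityReport" and ev.explainability_score < req["minScore"]:
            violations.append(kind)
        elif kind == "BiasDetection" and ev.demographic_parity_gap > req["maxDisparity"]:
            violations.append(kind)
        elif kind == "DataDriftMonitoring" and ev.psi > req["threshold"]:
            violations.append(kind)
    workflow = policy["approvalWorkflow"]
    # Only approvals from the groups named in the policy count.
    approved = ev.approvals & set(workflow["groups"])
    if len(approved) < workflow["minApprovals"]:
        violations.append("ApprovalWorkflow")
    return violations


candidate = Evidence(
    explainability_score=0.91,
    demographic_parity_gap=0.08,
    psi=0.04,
    approvals={"ai-ethics-committee"},
)
print(evaluate(POLICY, candidate))
```

For this candidate the bias gap exceeds 0.05 and only one of the two required groups has approved, so the function reports `BiasDetection` and `ApprovalWorkflow` and the release would be held back.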

FinOps for Cost and Compliance Observability of Responsible AI

FinOps, traditionally focused on cloud cost management, is extended by Apex Logic to provide granular visibility into the financial implications of maintaining responsible AI and achieving AI alignment. This goes beyond basic infrastructure costs to encompass:

- Responsible AI Cost Attribution: Tracking compute and storage costs associated with explainability tooling, adversarial testing frameworks, fairness audits, continuous monitoring for drift, and regulatory reporting.

- Compliance Cost Metrics: Quantifying the cost of maintaining specific compliance artifacts (e.g., generating audit logs, maintaining data provenance, running privacy-preserving computations).

- Anomaly Detection for Compliance & Cost: Identifying unexpected spikes in resource consumption that could indicate inefficient responsible AI practices or, conversely, a lack of necessary monitoring.

- ROI of Responsible AI: Providing data to demonstrate the return on investment for responsible AI initiatives by linking compliance costs to reduced risk and improved trust.

For instance, if a model requires extensive LIME/SHAP explanations for every inference in a regulated workflow, FinOps would track the additional GPU/CPU consumption for these computations. If a particular bias mitigation technique requires retraining on a larger, augmented dataset, FinOps would highlight the increased storage and training costs. This granular visibility allows organizations to optimize their responsible AI spending while ensuring compliance. The adoption of serverless architectures for inference and monitoring can further optimize costs, which FinOps tools can effectively track and analyze.
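The attribution described here usually comes down to grouping billing line items by tags. The sketch below assumes a hypothetical tagging scheme (`model` and `rai-activity` tag keys) and made-up line items; real billing exports have provider-specific schemas, so treat the field names as placeholders.

```python
from collections import defaultdict

# Hypothetical billing line items, already exported from a cloud provider.
LINE_ITEMS = [
    {"cost_usd": 120.0, "tags": {"model": "fraud-detection-v2", "rai-activity": "explainability"}},
    {"cost_usd": 45.5, "tags": {"model": "fraud-detection-v2", "rai-activity": "bias-audit"}},
    {"cost_usd": 300.0, "tags": {"model": "fraud-detection-v2"}},  # plain inference
    {"cost_usd": 30.0, "tags": {"model": "churn-v1", "rai-activity": "explainability"}},
    {"cost_usd": 12.25, "tags": {}},  # untagged spend stays visible as such
]


def attribute_costs(items):
    """Sum cost per (model, responsible-AI activity) pair."""
    totals = defaultdict(float)
    for item in items:
        tags = item.get("tags", {})
        key = (tags.get("model", "untagged"), tags.get("rai-activity", "none"))
        totals[key] += item["cost_usd"]
    return dict(totals)


def rai_share(totals, model):
    """Fraction of a model's total spend that went to responsible-AI activities."""
    model_total = sum(cost for (m, _), cost in totals.items() if m == model)
    rai_total = sum(cost for (m, a), cost in totals.items() if m == model and a != "none")
    return rai_total / model_total if model_total else 0.0


totals = attribute_costs(LINE_ITEMS)
for (model, activity), cost in sorted(totals.items()):
    print(f"{model:20s} {activity:15s} {cost:8.2f}")
print(f"RAI share for fraud-detection-v2: {rai_share(totals, 'fraud-detection-v2'):.1%}")
```

Keeping untagged spend as its own bucket, rather than dropping it, makes tagging gaps show up in the same report as the attributed costs.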

The Converged Architecture: An Apex Logic Perspective

The combined AI-driven FinOps GitOps architecture envisions a robust feedback loop:

- Git as SSOT (single source of truth): All AI assets (code, data schemas, model artifacts, governance policies, deployment manifests) are versioned in Git.

- CI/CD Pipelines: Triggered by Git commits, these pipelines automate model building, testing (including responsible AI checks like bias detection, explainability generation), and packaging. Policy engines (e.g., OPA) enforce governance policies defined in Git.

- GitOps Controllers: Continuously reconcile the desired state (from Git) with the actual state in the production environment (e.g., Kubernetes, serverless platforms), deploying models and their associated monitoring/governance infrastructure.

- Observability & Monitoring: Deployed models are continuously monitored for performance, data drift, concept drift, and adherence to responsible AI metrics.

- FinOps & Compliance Dashboards: Telemetry from monitoring tools, cloud providers, and MLOps platforms is fed into FinOps tools. These tools correlate resource consumption with specific AI models and responsible AI activities, providing real-time cost and compliance insights.

- Feedback Loop: Insights from FinOps (e.g., high cost of a specific explainability method) and monitoring (e.g., model drift) feed back into the Git repository, prompting updates to model configurations, data pipelines, or governance policies, thus closing the loop and enabling continuous improvement and release automation.
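As an illustration of the responsible AI checks mentioned in the CI/CD step, the following sketch computes a demographic parity gap, defined here as the largest difference in positive-prediction rate between any two groups. The function name, the toy data, and the 0.05 limit (borrowed from the example policy's `maxDisparity`) are assumptions for illustration; production pipelines typically rely on a dedicated fairness library and on statistically meaningful sample sizes.

```python
def demographic_parity_gap(predictions, groups):
    """Return (gap, per-group positive rates) for binary predictions."""
    if not predictions or len(predictions) != len(groups):
        raise ValueError("predictions and groups must be non-empty and equal in length")
    counts = {}
    for pred, group in zip(predictions, groups):
        positives, total = counts.get(group, (0, 0))
        counts[group] = (positives + int(pred == 1), total + 1)
    rates = {g: positives / total for g, (positives, total) in counts.items()}
    return max(rates.values()) - min(rates.values()), rates


# Toy data: group "a" is approved far more often than group "b".
preds = [1, 0, 1, 1, 0, 1, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

gap, rates = demographic_parity_gap(preds, groups)
MAX_DISPARITY = 0.05  # taken from the example policy; an assumption here
print(rates, gap, "FAIL" if gap > MAX_DISPARITY else "PASS")
```

A CI step could fail the build when the gap exceeds the policy limit, which is what turns the declared threshold into an enforced gate.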

Implementation Strategies, Trade-offs, and Failure Modes

Adopting an AI-driven FinOps GitOps framework requires careful planning and execution, especially in large enterprise environments.

Phased Adoption and Toolchain Integration

A 'big bang' approach is rarely successful. We advocate for a phased adoption, starting with critical, high-risk AI models or new greenfield projects. This allows teams to iterate, learn, and refine their processes. Key integration points include:

- MLOps Platforms: Integrating with existing platforms like Kubeflow, MLflow, or SageMaker for model lifecycle management.

- Policy Engines: Leveraging Open Policy Agent (OPA), Kyverno, or custom policy engines for declarative governance.

- Cloud Cost Management Tools: Utilizing native cloud tools (AWS Cost Explorer, Azure Cost Management, Google Cloud Billing reports) augmented with third-party FinOps solutions.

- Observability Stacks: Integrating with Prometheus, Grafana, ELK stack, Datadog, or New Relic for comprehensive monitoring.

Trade-offs:

- Greenfield vs. Brownfield: Implementing this framework on greenfield projects is simpler due to fewer legacy constraints. Brownfield integration requires significant effort to refactor existing pipelines, migrate models, and standardize governance, but offers greater long-term ROI by bringing legacy systems into compliance.

- Granularity vs. Overhead: Achieving extremely granular cost attribution for every responsible AI activity can introduce significant instrumentation overhead. A pragmatic approach involves identifying key cost drivers and compliance metrics to focus on initially.

Mitigating Common Failure Modes

Even with a robust architecture, several failure modes can undermine the effectiveness of an AI-driven FinOps GitOps strategy:

- Policy Drift: Governance policies defined in Git become outdated or are bypassed in practice. Mitigation: Regular policy reviews, automated policy validation, and strong cultural emphasis on 'Git as the Source of Truth.'

- Data Drift & Concept Drift: Changes in input data distribution or the underlying relationship between features and targets lead to model performance degradation and potential compliance issues (e.g., increased bias). Mitigation: Robust data monitoring, automated alerts, and a GitOps-driven retraining pipeline.

- Cost Sprawl & Inefficient Responsible AI: Uncontrolled spending on AI experiments, underutilized compute resources, or inefficient responsible AI tooling. Mitigation: Continuous FinOps monitoring, cost allocation tags, budget alerts, and optimization recommendations.

- Compliance Gaps & Audit Fatigue: Incomplete audit trails, missing explainability artifacts, or overwhelming manual effort for audits. Mitigation: Automated generation and versioning of all compliance artifacts via GitOps, integrated reporting dashboards.

- Alert Fatigue: Overwhelming number of alerts from monitoring systems, leading to critical issues being missed. Mitigation: Intelligent alert correlation, anomaly detection, and a tiered alerting strategy.
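The data drift and tiered alerting mitigations can be combined in a small sketch. The Population Stability Index (PSI) compares the binned distribution of a feature at training time with its distribution in production: PSI = Σ (aᵢ − eᵢ) · ln(aᵢ / eᵢ), where eᵢ and aᵢ are the expected and actual bin proportions. The equal-width binning, the epsilon smoothing for empty bins, and the 0.1 / 0.25 tiers (a common rule of thumb, with 0.1 matching the example policy) are assumptions of this sketch rather than fixed standards.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index using equal-width bins over the expected range."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor at eps so empty bins do not produce log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def severity(value, warn=0.1, page=0.25):
    """Tiered alerting: only the top tier should interrupt a human."""
    if value > page:
        return "page"
    if value > warn:
        return "ticket"
    return "ok"


baseline = [i / 100 for i in range(100)]            # training-time feature values
shifted = [0.5 + i / 200 for i in range(100)]       # production values, shifted upward

print(severity(psi(baseline, baseline)))
print(severity(psi(baseline, shifted)))
```

Routing moderate drift to a ticket and reserving pages for severe drift is one simple way to keep drift monitoring from contributing to alert fatigue.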

Source Signals

- Gartner: Predicts that by 2026, 80% of enterprises will have adopted AI governance frameworks, highlighting the urgency for structured approaches.

- European Commission (EU AI Act): Emphasizes strict requirements for high-risk AI systems regarding transparency, human oversight, and robustness, directly driving the need for verifiable AI alignment.

- NIST AI Risk Management Framework: Provides a voluntary framework for managing risks associated with AI, advocating for comprehensive governance and risk assessment practices.

- OpenAI: Their research consistently underscores the importance of interpretability and control in advanced AI systems, influencing industry best practices for responsible AI.

- FinOps Foundation: Continues to expand its guidance on cost optimization and financial accountability in cloud environments, with increasing focus on AI/ML workloads.

Technical FAQ

Q1: How does GitOps specifically enhance AI model explainability and auditability?

A1: GitOps does not make a model more interpretable by itself; it enhances explainability by versioning not just the model code, but also the explainability reports (e.g., LIME, SHAP outputs), feature importance metrics, and the policies that mandate their generation. For auditability, every change to these artifacts, along with deployment configurations and data lineage, is tracked in Git, providing a complete, tamper-evident history that auditors can verify, as long as history rewriting is restricted.

Q2: What is the primary challenge in integrating FinOps with AI governance, and how does Apex Logic address it?

A2: The primary challenge is accurately attributing costs to specific responsible AI practices and demonstrating their ROI. For example, quantifying the cost of bias detection runs or explainability report generation. Apex Logic addresses this by integrating FinOps tools directly with MLOps platforms and cloud resource tagging strategies, allowing for granular cost allocation to specific responsible AI activities, models, and teams. This provides detailed insights into where resources are being consumed for governance, enabling optimization.

Q3: Can this AI-driven FinOps & GitOps framework be applied to existing, in-production AI systems, or is it only suitable for new deployments?

A3: While easier to implement on new, greenfield AI projects, the framework is designed to be incrementally adoptable for existing in-production AI systems. Organizations can start by externalizing existing governance policies into Git (policy-as-code), integrating GitOps controllers for critical model updates, and instrumenting existing models for FinOps observability. This phased approach allows for gradual modernization and compliance without requiring a complete overhaul.

Conclusion

The convergence of AI-driven FinOps & GitOps represents the future of responsible AI governance and operational excellence in the enterprise. By architecting a unified framework that leverages declarative management for AI lifecycles and provides granular financial and compliance observability, organizations can confidently navigate the complex regulatory landscape of 2026 and beyond. This approach not only ensures verifiable AI alignment and robust responsible AI practices but also delivers tangible benefits in terms of improved engineering productivity, accelerated release automation, and ultimately, enhanced trust in AI systems. At Apex Logic, we are committed to empowering CTOs and lead engineers with the blueprints and tools necessary to build this future.
