
The Imperative: Proactive Security for Multimodal AI in 2026

As Lead Cybersecurity & AI Architect at Apex Logic, I've witnessed firsthand the profound impact and inherent complexities introduced by the rapid adoption of multimodal AI systems. In 2026, these sophisticated deployments, integrating capabilities across text, image, audio, and more, are no longer theoretical; they are foundational to competitive advantage. However, this innovation ushers in a new era of cybersecurity challenges, creating complex attack surfaces and novel vulnerabilities that traditional security paradigms struggle to address. Integrating robust cybersecurity practices directly into the AI development and deployment lifecycle is therefore urgent.

Our strategic imperative at Apex Logic is to establish an AI-driven FinOps GitOps architecture. This framework is not merely an aggregation of tools but a systematic, integrated approach designed to proactively identify, model, and remediate threats specific to responsible multimodal AI. Our objective is clear: ensure AI alignment and uphold responsible AI principles throughout the system's operational lifecycle, while leveraging the declarative power of GitOps and the analytical prowess of AI-driven automation for enhanced engineering productivity and streamlined release automation. This article delves into the architectural blueprint, implementation details, inherent trade-offs, and critical failure modes of such a system, targeting CTOs and lead engineers grappling with these very challenges.

The Evolving Threat Landscape of Multimodal AI in 2026

The convergence of diverse data types and models in multimodal AI creates an attack surface far more intricate than its unimodal predecessors. Understanding these unique vectors is the first step in architecting an effective defense.

Unique Attack Surfaces and Vulnerabilities

Traditional threats persist, but multimodal AI introduces new dimensions:

- Cross-Modal Adversarial Attacks: An attacker might inject subtle, imperceptible perturbations into one modality (e.g., an audio track) that, when processed alongside another (e.g., video), biases the AI's decision-making in an unforeseen way. This could lead to misclassification, denial of service, or even data exfiltration.

- Data Poisoning at Scale: Corrupting training data for a single modality has always been a concern. With multimodal systems, poisoning one data stream can have cascading, unpredictable effects across interconnected models, leading to systemic bias or critical vulnerabilities that are hard to trace.

- Prompt Injection and Modality Confusion: Beyond text-based prompt injection, imagine an image or audio prompt designed to bypass safety filters or extract sensitive information, exploiting the AI's interpretation across modalities.

- Model Inversion and Reconstruction: The ability to reconstruct sensitive training data from model outputs is amplified when multiple data types are involved, increasing the risk of privacy breaches.

- Supply Chain Risks for Foundation Models: The reliance on third-party foundation models introduces inherent trust issues. Verifying the integrity and security of these pre-trained components, especially once they are fine-tuned for multimodal tasks, is a substantial undertaking.
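One small, concrete piece of the supply-chain problem is confirming that a downloaded model artifact is byte-for-byte the one that was reviewed. The following is a minimal sketch, assuming the expected SHA-256 digest is pinned in Git next to the deployment manifests; the function names and the pinning convention are illustrative assumptions, not part of any specific tool.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file in chunks so large model weights are never fully loaded into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, pinned_digest: str) -> bool:
    """Return True only if the artifact matches the (hypothetical) digest pinned in Git."""
    actual = sha256_of_file(path)
    # compare_digest performs a constant-time comparison of the two hex strings.
    return hmac.compare_digest(actual, pinned_digest.lower())
```

A digest check only proves the file is unchanged since it was pinned; it says nothing about whether the original weights were trustworthy, so it complements rather than replaces provenance review.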

Operational Complexity and Compliance

Managing the security posture of distributed multimodal AI deployments across heterogeneous environments (on-prem, multi-cloud, edge) significantly amplifies operational complexity. Regulatory bodies are rapidly evolving their stances on AI governance and data privacy, particularly concerning sensitive multimodal data. Ensuring continuous compliance, auditability, and adherence to responsible AI principles becomes a non-negotiable requirement, directly impacting FinOps considerations for security tooling and operational overhead.

Architecting an AI-Driven FinOps GitOps Framework for Multimodal AI Security

Our proposed architecture at Apex Logic integrates declarative infrastructure, AI-powered threat intelligence, and cost management to create a resilient, self-healing security posture for multimodal AI.

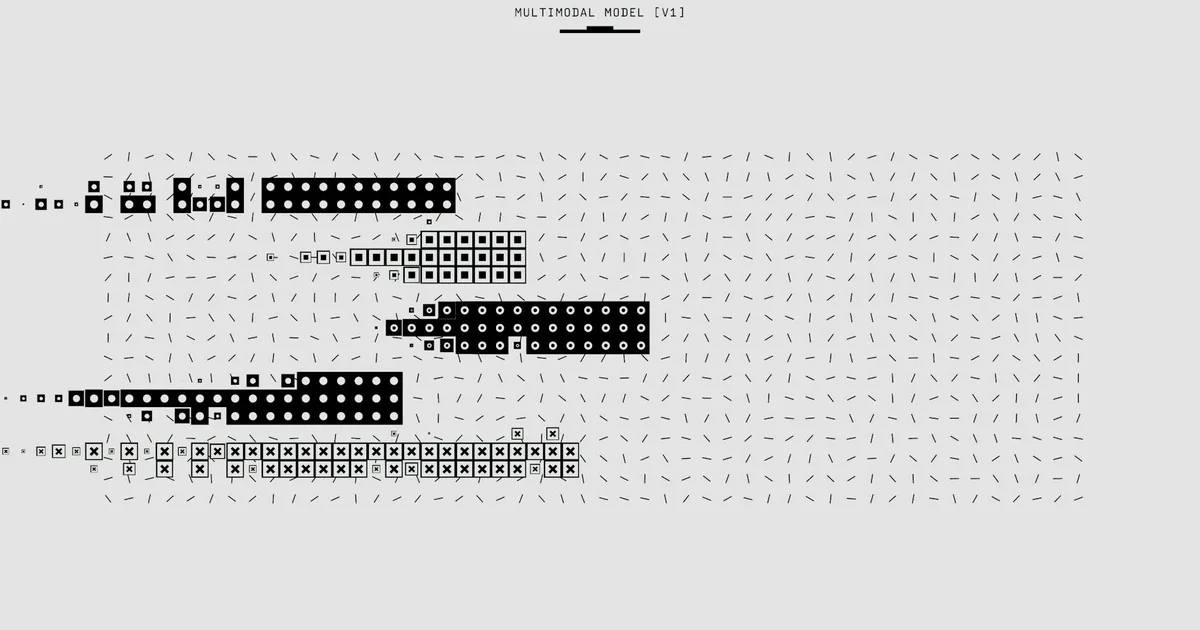

Core Components and Integration

GitOps Foundation: The bedrock of our architecture. Kubernetes, managed by tools like ArgoCD or Flux, serves as the orchestration layer. All infrastructure (IaC via Terraform/Pulumi), application configurations, and security policies (e.g., network policies, RBAC, OPA/Kyverno rules) are defined as code in Git repositories. This enables true release automation, ensuring state consistency and providing an auditable trail for every change. This declarative approach is fundamental to boosting engineering productivity.

AI-Driven Threat Intelligence & Modeling Platform: This is the brain of our security operations. It comprises:

- Anomaly Detection Engines: ML models (e.g., deep learning for time-series analysis) that analyze runtime behavior, network traffic (flow logs), system logs (Kubernetes audit logs, CloudTrail), and model inference logs for deviations indicative of attacks on multimodal AI components.

- Vulnerability Prediction Models: AI trained on historical CVEs, code patterns, architectural designs, and static/dynamic analysis tool outputs (e.g., SonarQube, Snyk) to predict potential vulnerabilities in new code or model deployments.

- Dynamic Threat Graph Generation: A knowledge graph (e.g., powered by Neo4j) representing the interdependencies of AI models, data sources, APIs, and infrastructure, dynamically updated by AI (e.g., using Graph Neural Networks) to identify potential attack paths and blast radii.

- Adversarial Attack Detection & Mitigation: Specialized models designed to identify subtle adversarial perturbations in input data across modalities (e.g., using robust feature extraction, input sanitization) before they impact the AI model's output, potentially leveraging techniques like adversarial training.
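To make the threat-graph idea concrete, here is a minimal sketch of a blast-radius query. It assumes the graph is a plain in-memory adjacency map rather than a graph database, and it uses breadth-first search rather than a Graph Neural Network; the component names are hypothetical.

```python
from collections import deque

# Hypothetical dependency edges: "X -> Y" means a compromise of X can reach Y.
THREAT_GRAPH = {
    "audio-ingest-api": ["speech-encoder"],
    "image-ingest-api": ["vision-encoder"],
    "speech-encoder": ["fusion-model"],
    "vision-encoder": ["fusion-model"],
    "feature-store": ["fusion-model"],
    "fusion-model": ["decision-service"],
    "decision-service": [],
}


def blast_radius(graph, compromised):
    """Breadth-first search: every component reachable from the compromised one."""
    reached = set()
    queue = deque([compromised])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, []):
            if neighbor not in reached and neighbor != compromised:
                reached.add(neighbor)
                queue.append(neighbor)
    return reached
```

For example, `blast_radius(THREAT_GRAPH, "audio-ingest-api")` returns the speech encoder, the fusion model, and the decision service, which is the set of components a reviewer would want to examine if the audio path were compromised.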

FinOps Integration: Security is a cost center, but an essential one. Our AI-driven FinOps GitOps architecture embeds cost visibility and optimization into every security decision. Automated tagging, resource allocation policies, and cost attribution (e.g., via Kubecost, Cloudability) are enforced via GitOps. The AI platform helps optimize security spending by prioritizing remediation efforts based on risk and potential financial impact, and by suggesting cost-effective security controls and resource scaling for security tools.
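Prioritizing remediation by risk and financial impact can be sketched as a simple ranking problem. The example below is an illustration under stated assumptions: the `Finding` fields, the dollar estimates, and the greedy budget rule are all hypothetical, and a production system would derive likelihood and impact from its own models.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    name: str
    likelihood: float        # estimated probability of exploitation, 0..1
    impact_usd: float        # estimated loss if exploited
    remediation_usd: float   # estimated cost to fix

    def __post_init__(self):
        if self.remediation_usd <= 0:
            raise ValueError("remediation_usd must be positive")

    @property
    def expected_loss(self) -> float:
        return self.likelihood * self.impact_usd

    @property
    def return_on_fix(self) -> float:
        # Expected loss avoided per dollar of remediation spend.
        return self.expected_loss / self.remediation_usd


def prioritize(findings, budget_usd):
    """Greedy selection: best return-on-fix first, skipping anything over the remaining budget."""
    chosen, remaining = [], budget_usd
    for finding in sorted(findings, key=lambda f: f.return_on_fix, reverse=True):
        if finding.remediation_usd <= remaining:
            chosen.append(finding)
            remaining -= finding.remediation_usd
    return chosen
```

Greedy selection is easy to explain in a cost review but is not guaranteed to be optimal; it is used here only to show how risk and cost can be combined into one ordering.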

Responsible AI Guardrails: Integrated directly into the security pipeline, these components ensure AI alignment. This includes Explainable AI (XAI) modules (e.g., LIME, SHAP) that provide transparency into the security AI's decisions, bias detection (e.g., IBM AI Fairness 360) in both the primary multimodal AI and the security AI itself, and mechanisms for human oversight and intervention, ensuring ethical AI deployment.
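As one example of what a bias check can compute, the sketch below implements the disparate impact ratio, a standard fairness metric, in plain Python. It assumes decisions are encoded as 1 (favorable, for instance a request allowed by the security AI) and 0 (unfavorable), and it uses the common four-fifths threshold of 0.8; the grouping into "privileged" and "unprivileged" cohorts is a hypothetical input.

```python
def favorable_rate(outcomes):
    """Share of favorable (1) outcomes in a list of 0/1 decisions."""
    if not outcomes:
        raise ValueError("group has no outcomes")
    return sum(outcomes) / len(outcomes)


def disparate_impact(unprivileged, privileged):
    """Ratio of favorable rates; 1.0 means both groups are treated alike."""
    privileged_rate = favorable_rate(privileged)
    if privileged_rate == 0:
        raise ValueError("privileged group has no favorable outcomes")
    return favorable_rate(unprivileged) / privileged_rate


def passes_four_fifths(unprivileged, privileged, threshold=0.8):
    """Flag a potential bias issue when the ratio falls below the threshold."""
    return disparate_impact(unprivileged, privileged) >= threshold
```

A failing check is a prompt for human review rather than a verdict, which fits the human-oversight mechanisms described above.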

Operationalizing the Architecture: Workflow, Remediation, and FinOps Integration

The workflow is a continuous loop, shifting security left in the development process and ensuring continuous protection.

Workflow: From Code to Remediation

- DevSecOps Pipeline: Developers commit code and model artifacts to Git. CI/CD pipelines (e.g., GitLab CI, GitHub Actions, Jenkins) automatically trigger static application security testing (SAST with Checkmarx/SonarQube), dynamic application security testing (DAST with OWASP ZAP), software composition analysis (SCA with Snyk/Dependency-Track), and container image scanning (with Clair/Aqua Security).

- AI-Driven Threat Modeling: Post-build, the AI Threat Intelligence platform analyzes the new code, model artifacts, infrastructure changes, and the outputs from various security tools. It uses its dynamic threat graph and prediction models to identify potential vulnerabilities, misconfigurations, and novel attack vectors specific to the multimodal AI context. This includes simulating cross-modal attacks and predicting their impact.

- Policy Enforcement & Remediation: Identified threats are correlated with predefined security policies (policy-as-code, managed via Git and enforced by OPA/Kyverno). If a violation or high-risk threat is detected, the system can:

  - Automatically generate a remediation pull request (e.g., updating a Kubernetes network policy, patching a vulnerable dependency, suggesting a model re-training with debiased data, or hardening a container image).

  - Alert security teams with high-fidelity, AI-contextualized alerts, including potential financial impact from the FinOps module.

  - Trigger automated rollback via GitOps if a critical threat is deployed or if a security gate fails.

- Continuous Monitoring & Anomaly Detection: Post-deployment, the AI platform continuously monitors runtime behavior, data flows, model performance, and resource consumption for anomalies. Any deviation triggers alerts and potential automated remediation actions, with FinOps providing real-time cost implications of security incidents.
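The monitoring and response steps in this workflow can be sketched in a few lines. This is a deliberately simple illustration, assuming a z-score test against a baseline window in place of the deep-learning detectors described earlier; the thresholds and the mapping from risk score to action are hypothetical.

```python
from statistics import mean, stdev


def zscore_anomalies(baseline, observed, threshold=3.0):
    """Return indices in `observed` that deviate from the baseline by more than `threshold` sigmas.

    `baseline` needs at least two samples for the standard deviation to be defined.
    """
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value counts as a deviation.
        return [i for i, value in enumerate(observed) if value != mu]
    return [i for i, value in enumerate(observed) if abs(value - mu) / sigma > threshold]


def choose_action(risk_score, deployed):
    """Map a 0..1 risk score to a response (thresholds are illustrative only)."""
    if risk_score >= 0.9 and deployed:
        return "rollback"
    if risk_score >= 0.6:
        return "alert"
    if risk_score >= 0.3:
        return "remediation-pr"
    return "none"
```

For instance, with a latency baseline of `[100, 102, 98, 101, 99]` milliseconds, an observed value of 140 is flagged while 103 is not. Keeping rollback behind both a high score and a deployed state reflects the trade-off between fast containment and the cost of reverting a healthy release.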

Practical Code Example: GitOps with AI-Driven Security Hooks

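Here’s an illustrative YAML snippet for an ArgoCD Application, demonstrating how AI-driven security checks are integrated as hooks within a GitOps deployment pipeline for a multimodal AI service. This exemplifies proactive threat modeling before synchronization and AI alignment validation post-deployment. Treat the `hooks` block as schematic: Argo CD's Application spec has no `hooks` field, and in practice resource hooks are declared on the synced manifests themselves (for example, a Kubernetes Job) with the `argocd.argoproj.io/hook: PreSync` annotation.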

    apiVersion: argoproj.io/v1alpha1
    kind: Application
    metadata:
      name: multimodal-ai-service
      namespace: argocd
    spec:
      project: default
      source:
        repoURL: https://github.com/apexlogic/multimodal-ai-repo.git
        targetRevision: HEAD
        path: kubernetes/prod
      destination:
        server: https://kubernetes.default.svc
        namespace: prod-ai
      syncPolicy:
        automated:
          prune: true
          selfHeal: true
        syncOptions:
          - CreateNamespace=true
        hooks:
          - name: pre-sync-ai-threat-scan
            hook: PreSync
            command: [
