The 2026 Imperative: Verifiable Responsible Multimodal AI Alignment

In 2026, the pervasive integration of multimodal AI into Software-as-a-Service (SaaS) offerings presents both unparalleled opportunities and profound challenges. For Apex Logic, ensuring verifiable responsible AI alignment is no longer a mere technical aspiration but a critical business imperative. The stakes are immense: brand reputation, regulatory compliance, customer trust, and ultimately market share hinge on our ability not only to deploy cutting-edge multimodal AI but also to do so with demonstrable ethical rigor and financial prudence. This requires a shift in how we approach SaaS release automation, toward an AI-driven FinOps GitOps architecture.

Evolving Risks of Multimodal AI in SaaS

The complexity of multimodal AI models, which process and correlate data from text, image, audio, and video, introduces a new class of risks. Bias amplification, subtle hallucinations, data leakage across modalities, and the inherent 'black box' nature of deep learning models can lead to unpredictable and potentially damaging outcomes. Traditional governance models struggle to keep pace. For instance, a multimodal AI assisting in medical diagnostics could learn and propagate biases from its training data, leading to disparate outcomes based on patient demographics embedded in imagery or voice. Similarly, an AI-powered content generation tool might produce offensive material by misinterpreting contextual cues across modalities. The challenge is not just identifying these issues but preventing them at scale, across the hundreds or thousands of rapid deployments characteristic of modern SaaS release automation.

Beyond Model Cards: Operationalizing Responsible AI

While model cards and ethical guidelines are foundational, they are insufficient for the dynamic, high-velocity environment of SaaS development in 2026. Responsible AI must be operationalized, embedded directly into the development and deployment lifecycle. This means moving beyond static documentation to dynamic, automated enforcement and continuous verification. We need systems that can monitor multimodal AI behavior in production, detect deviations from desired ethical benchmarks, and trigger automated remediation. This operationalization directly contributes to customer trust and regulatory compliance, transforming responsible AI from a compliance burden into a competitive differentiator.

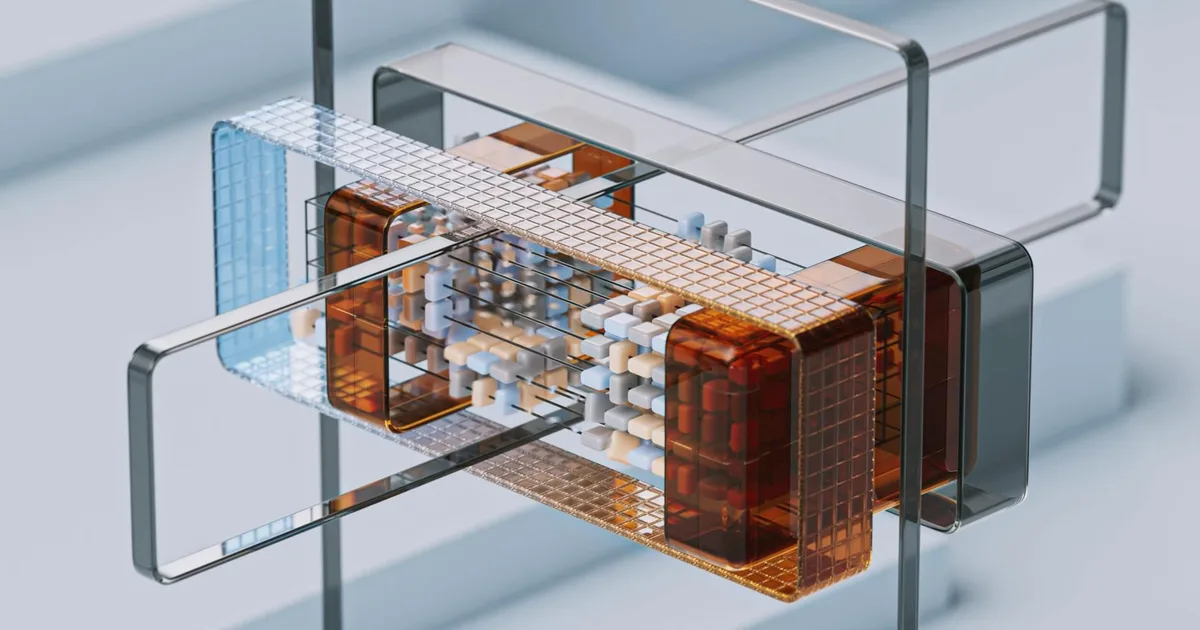

AI-Driven FinOps GitOps Architecture for SaaS Release Automation

Our strategy at Apex Logic hinges on an AI-driven FinOps GitOps architecture. This integrated approach leverages the principles of GitOps for declarative infrastructure and policy management, augmented by AI-driven insights for both responsible AI alignment and proactive FinOps cost optimization across our entire SaaS release automation pipeline.

Core Tenets: GitOps as the Control Plane

At the heart of this architecture is GitOps. All configurations – Infrastructure as Code (IaC), Policy as Code (PaC), Model as Code (MaC), and application code – reside in version-controlled Git repositories. This single source of truth ensures consistency, auditability, and rollback capabilities for every aspect of our SaaS deployments. For multimodal AI, this means model definitions, training parameters, inference configurations, and even data provenance metadata are all managed declaratively through Git. Pull requests become the mechanism for all changes, triggering automated CI/CD pipelines that enforce policies and deploy artifacts.
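As a rough illustration of what a Model as Code check could look like in CI, the sketch below validates a model manifest before a pull request is merged. The field names (`name`, `version`, `modalities`, `training_data_ref`, `inference`) and the example values are hypothetical, not Apex Logic's actual schema.

```python
# Hypothetical manifest schema: field name -> expected Python type.
REQUIRED_FIELDS = {
    "name": str,
    "version": str,
    "modalities": list,
    "training_data_ref": str,  # pointer to data provenance metadata
    "inference": dict,
}


def validate_manifest(manifest):
    """Return a list of human-readable errors; an empty list means valid."""
    errors = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in manifest:
            errors.append(f"missing field: {field}")
        elif not isinstance(manifest[field], expected_type):
            errors.append(f"{field} must be of type {expected_type.__name__}")
    return errors


# Illustrative manifest as it might be parsed from a file in the MaC repository.
example = {
    "name": "multimodal-content-gen",
    "version": "1.4.0",
    "modalities": ["text", "image"],
    "training_data_ref": "datasets/marketing-v7",
    "inference": {"replicas": 2},
}
errors = validate_manifest(example)
```

A pipeline stage could fail the pull request whenever the returned list is non-empty, so that incomplete model definitions never reach the deployment step.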

AI-Driven Observability and Policy Enforcement

Integrating AI-driven observability is crucial. We deploy specialized machine learning models to continuously monitor the outputs and behaviors of our production multimodal AI systems. These AI monitors detect drifts in performance, identify potential biases, flag anomalous outputs, and verify adherence to pre-defined responsible AI policies. For instance, an AI-driven system might analyze sentiment and content generated by a multimodal model, comparing it against a baseline of ethical guidelines. These observations feed back into our GitOps workflows, potentially triggering automated policy adjustments or human intervention. Policy enforcement, often orchestrated by tools like Open Policy Agent (OPA) or Kyverno, is triggered by every Git commit and deployment event, ensuring that no change bypasses our defined responsible AI and security guardrails.
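One simple way such a monitor might quantify drift is the Population Stability Index (PSI), computed over the labels a content-safety classifier assigns to model outputs. The sketch below is a minimal version under that assumption; the label names and the 0.25 threshold (a common rule of thumb, not a value from this architecture) are illustrative.

```python
import math
from collections import Counter


def population_stability_index(baseline, current, epsilon=1e-6):
    """PSI between two samples of categorical labels (0.0 means identical)."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    base_counts, cur_counts = Counter(baseline), Counter(current)
    psi = 0.0
    for category in set(baseline) | set(current):
        # Clamp proportions away from zero so the log term stays finite.
        expected = max(base_counts[category] / len(baseline), epsilon)
        actual = max(cur_counts[category] / len(current), epsilon)
        psi += (actual - expected) * math.log(actual / expected)
    return psi


def drift_detected(baseline, current, threshold=0.25):
    """Flag drift when PSI exceeds the (assumed) alerting threshold."""
    return population_stability_index(baseline, current) > threshold


# Hypothetical label samples: last month's baseline vs. this week's outputs.
baseline_labels = ["safe"] * 95 + ["flagged"] * 5
current_labels = ["safe"] * 70 + ["flagged"] * 30
needs_review = drift_detected(baseline_labels, current_labels)
```

A positive result would typically open an issue or pull request for human review rather than change policy automatically.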

FinOps Integration: Real-time Cost Governance

The FinOps component is equally critical for Apex Logic. As multimodal AI models are resource-intensive, optimizing cloud spend is paramount. Our AI-driven FinOps GitOps architecture integrates real-time cost observability and governance directly into the development lifecycle. AI models analyze cloud resource usage patterns, predict future costs, detect anomalies (e.g., unexpected spikes in GPU utilization for an inference endpoint), and recommend optimizations. This includes automated cost tagging, rightsizing suggestions for Kubernetes pods hosting multimodal AI services, and even dynamic scaling policies informed by cost-performance trade-offs. These recommendations are integrated into CI/CD, allowing engineers to make cost-aware decisions before deployment, with policies enforced via GitOps.
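The anomaly detection described above could be approximated, in its simplest form, by a trailing-window z-score over hourly cost samples. This sketch assumes costs arrive as a plain list of floats; the window size, threshold, and synthetic data are illustrative choices rather than tuned production values.

```python
from statistics import mean, stdev


def find_cost_anomalies(hourly_costs, window=24, z_threshold=3.0, min_sigma=1e-9):
    """Return indices of points that deviate sharply from the trailing window."""
    anomalies = []
    for i in range(window, len(hourly_costs)):
        history = hourly_costs[i - window:i]
        mu = mean(history)
        # Floor sigma so a perfectly flat history does not divide by zero.
        sigma = max(stdev(history), min_sigma)
        if abs(hourly_costs[i] - mu) / sigma > z_threshold:
            anomalies.append(i)
    return anomalies


# Synthetic hourly GPU spend: a steady pattern with one spike at index 25.
costs = [10.0, 11.0] * 12 + [10.5, 40.0, 10.0]
spikes = find_cost_anomalies(costs)
```

A real deployment would likely account for seasonality (for example, weekday versus weekend traffic), which a plain z-score ignores.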

Architectural Blueprint

The cohesive AI-driven FinOps GitOps architecture for Apex Logic comprises several key components:

- Git Repositories: Centralized source for IaC (Terraform, Pulumi), PaC (OPA Rego policies), MaC (model definitions, training scripts, artifact metadata), and application code.

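- CI/CD Pipelines (e.g., GitLab CI, Argo CD): Orchestrate builds, tests, policy checks, and deployments, driven by Git events. A CI system such as GitLab CI runs the build, test, and policy-check stages, while Argo CD, a continuous delivery tool rather than a CI system, reconciles Kubernetes clusters against the state declared in Git.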

- Policy Engines (e.g., OPA, Kyverno): Enforce declarative policies on infrastructure, deployments, and model configurations, ensuring responsible AI and security compliance.

- AI-Driven Observability Platform: Collects metrics, logs, and traces from multimodal AI services. Custom ML services analyze this data for performance drift, bias detection, fairness metrics, and anomaly detection. Integrates with Prometheus for metrics collection and Grafana for visualization.

- Cost Management Platforms: Cloud provider native tools (AWS Cost Explorer, Azure Cost Management) augmented by dedicated FinOps platforms (e.g., CloudHealth, Apptio Cloudability) for granular cost allocation and optimization recommendations.

- Multimodal AI Serving Infrastructure: Kubernetes clusters with GPU/TPU node pools, or managed services (e.g., SageMaker, Vertex AI), for efficient inference and model lifecycle management.

- Feedback Loops: Automated alerts, policy violation reports, cost optimization suggestions, and responsible AI insights fed back to developers and stakeholders, closing the loop for continuous improvement.

Operationalizing Responsible AI and Cost Controls

The true power of this architecture lies in its ability to operationalize complex requirements through automation and policy.

Implementing Responsible AI Gates in GitOps Workflows

Every proposed change, whether a new multimodal AI model version or an infrastructure update, passes through automated responsible AI gates. These gates are defined as policies-as-code and enforced within the CI/CD pipeline. For instance, before a new multimodal AI model can be deployed, automated tests verify its fairness metrics across demographic groups, detect potential biases in generated outputs, and ensure compliance with data privacy regulations. These checks are integrated as mandatory stages in the Git pull request workflow.
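To make the fairness check concrete, the sketch below computes a demographic parity gap (the difference between the highest and lowest positive-outcome rates across groups) and turns it into a pass/fail gate. The record format, group names, and the 0.1 tolerance are assumptions for illustration; demographic parity is only one of several fairness metrics a real gate might use.

```python
from collections import defaultdict


def positive_rates(records):
    """records: iterable of (group, outcome) pairs, with outcome 0 or 1."""
    totals, positives = defaultdict(int), defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        positives[group] += outcome
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(records):
    """Largest difference in positive-outcome rate between any two groups."""
    rates = positive_rates(records)
    if not rates:
        raise ValueError("no evaluation records supplied")
    return max(rates.values()) - min(rates.values())


def passes_fairness_gate(records, max_gap=0.1):
    """True when the gap stays within the (assumed) tolerance."""
    return demographic_parity_gap(records) <= max_gap


# Hypothetical evaluation results for two demographic groups.
evaluation = (
    [("group_a", 1)] * 8 + [("group_a", 0)] * 2
    + [("group_b", 1)] * 5 + [("group_b", 0)] * 5
)
gate_ok = passes_fairness_gate(evaluation)
```

In a pull request workflow, a failing gate would block the merge and attach the per-group rates to the review so engineers can see where the gap comes from.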

Consider a policy for deploying a new multimodal AI service that generates marketing copy and images. An OPA policy might look like this:

    package saas.ai.deployment.policy

    import rego.v1

    # Block the content-generation Deployment unless it carries the
    # certification label applied after automated responsible AI checks pass.
    deny contains msg if {
        input.request.kind.kind == "Deployment"
        input.request.object.metadata.labels.app == "multimodal-content-gen"
        not input.request.object.metadata.labels["ai.apexlogic.com/responsible-ai-certified"] == "true"
        msg := "Deployment of multimodal-content-gen requires 'ai.apexlogic.com/responsible-ai-certified: true' label after passing automated responsible AI checks."
    }

    # Block the Deployment if its declared hourly inference cost cap exceeds 100.
    deny contains msg if {
        input.request.kind.kind == "Deployment"
        input.request.object.metadata.labels.app == "multimodal-content-gen"
        # Bind a single env entry so the name and value checks hit the same variable.
        some container in input.request.object.spec.template.spec.containers
        some env in container.env
        env.name == "MAX_INFERENCE_COST_PER_HOUR"
        to_number(env.value) > 100
        msg := "Multimodal content generation service exceeds maximum allowed inference cost per hour."
    }

This Rego policy snippet demonstrates two critical checks: requiring a responsible AI certification label (implying that the automated ethical checks passed) and rejecting any deployment whose declared MAX_INFERENCE_COST_PER_HOUR exceeds 100, directly linking responsible AI with FinOps considerations. The rules use the `if` and `contains` keywords through `import rego.v1`, which OPA 1.0 requires by default.

Proactive FinOps Automation for SaaS Release Automation

The AI-driven FinOps component ensures that cost considerations are baked into every stage of SaaS release automation. Our AI models predict resource needs based on historical usage and anticipated demand for new multimodal AI features. This allows for dynamic resource provisioning, automatically adjusting infrastructure to meet performance targets within defined cost envelopes. Automated rightsizing suggestions for compute and storage (especially for large multimodal AI models and their data) are integrated into pull requests, allowing engineers to see the cost impact of their changes before merging. This proactive approach minimizes waste and prevents unexpected cloud bills, directly impacting profitability.
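A rightsizing suggestion of the kind surfaced in a pull request could, in its simplest form, be derived from a high percentile of observed usage plus headroom. The sketch below assumes CPU usage samples in millicores; the 95th percentile, 20% headroom, and the per-core price are hypothetical inputs, not recommendations.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def suggest_cpu_request(usage_millicores, headroom_percent=20, pct=95):
    """Suggested CPU request: the chosen usage percentile plus headroom."""
    peak = percentile(usage_millicores, pct)
    return math.ceil(peak * (100 + headroom_percent) / 100)


def monthly_cost_delta(current_millicores, suggested_millicores, replicas,
                       usd_per_core_hour, hours_per_month=730):
    """Estimated monthly cost change; a negative value is a saving."""
    delta_cores = (suggested_millicores - current_millicores) / 1000
    return delta_cores * replicas * usd_per_core_hour * hours_per_month


# Hypothetical usage samples for one pod, in millicores.
usage = list(range(100, 1100, 100))
suggested = suggest_cpu_request(usage)
delta = monthly_cost_delta(2000, suggested, replicas=4, usd_per_core_hour=0.04)
```

Posting both the suggested request and the estimated monthly delta as a pull request comment lets the engineer weigh the saving against the performance risk before merging.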

Verifiable Audit Trails and Reporting

Every decision, every policy enforcement, and every deployed artifact is versioned and auditable within Git. This provides a complete, verifiable audit trail of all changes to infrastructure, policies, and multimodal AI models. Dedicated dashboards, powered by the AI-driven observability platform, provide real-time insights into responsible AI metrics (e.g., bias scores, fairness gaps) and FinOps KPIs (e.g., cost per transaction, resource utilization). This transparency is crucial for internal governance, external compliance, and building customer trust in Apex Logic's responsible AI commitments.

Engineering Productivity and Future Outlook

Beyond compliance and cost, this AI-driven FinOps GitOps architecture significantly enhances engineering productivity, a key strategic advantage for Apex Logic in 2026.

Boosting Engineering Productivity

By automating compliance checks, cost optimizations, and deployment processes, engineers are freed from repetitive, manual tasks. They receive faster feedback on their changes, allowing them to iterate more quickly and focus on innovation rather than administrative overhead. The standardized, declarative nature of GitOps reduces cognitive load and onboarding time, making teams more efficient and reducing errors. This translates directly into faster time-to-market for new multimodal AI features and a more agile response to evolving customer needs.

Trade-offs and Challenges

Implementing such a sophisticated architecture is not without its challenges. Initial setup complexity, the integration of disparate tools, and the cultural shift toward everything-as-code all require significant investment. Managing the vast data volumes and computational demands of monitoring complex multimodal AI models can be resource-intensive. Furthermore, the definition of 'responsible AI' is continuously evolving, requiring constant adaptation of policies and monitoring mechanisms. Integration headaches between cloud-native tools, open-source solutions, and custom AI-driven services can also pose hurdles.

Failure Modes and Mitigation Strategies

- Policy Drift: Policies-as-code can become outdated or misaligned with evolving regulatory landscapes or responsible AI best practices. Mitigation: Regular, automated policy validation against external standards, scheduled policy review cycles, and AI-driven analysis of policy effectiveness.

- AI Model Drift (Responsible AI Alignment): Production multimodal AI models can drift in performance or exhibit emergent biases not present during initial testing. Mitigation: Robust MLOps practices, continuous AI-driven monitoring for performance degradation and fairness metrics, automated alerts, and rapid retraining/redeployment pipelines.

- Cost Overruns Despite FinOps: Inadequate tagging, misconfigured policies, or unforeseen usage spikes can lead to unexpected costs. Mitigation: Granular cost tagging enforcement via policies, real-time AI-driven anomaly detection with immediate alerts, and a clear escalation matrix for human FinOps review.

- Alert Fatigue: Overly aggressive AI-driven monitoring or policy engines can generate excessive alerts, leading to engineers ignoring critical warnings. Mitigation: Intelligent alert aggregation, prioritization based on severity and impact, and continuous tuning of AI-driven monitoring thresholds.
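The alert aggregation and prioritization mitigation can be sketched as a small deduplication step: repeated alerts for the same service and rule collapse into one entry, which keeps the most severe level seen and is sorted so critical items surface first. The `Alert` fields and severity names below are assumptions for illustration.

```python
from dataclasses import dataclass

# Lower rank means higher priority.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass(frozen=True)
class Alert:
    service: str
    rule: str
    severity: str


def aggregate_alerts(alerts):
    """Collapse duplicates per (service, rule) and sort by severity, then count."""
    grouped = {}
    for alert in alerts:
        entry = grouped.setdefault(
            (alert.service, alert.rule),
            {"service": alert.service, "rule": alert.rule,
             "severity": alert.severity, "count": 0},
        )
        entry["count"] += 1
        # Keep the most severe level observed for this group.
        if SEVERITY_RANK[alert.severity] < SEVERITY_RANK[entry["severity"]]:
            entry["severity"] = alert.severity
    return sorted(
        grouped.values(),
        key=lambda e: (SEVERITY_RANK[e["severity"]], -e["count"]),
    )
```

Engineers then see one line per underlying problem with an occurrence count, instead of a stream of near-identical notifications.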

Source Signals

- Gartner: Predicts that by 2026, 80% of organizations will have established AI governance frameworks, with a strong emphasis on responsible AI and trustworthiness.

- FinOps Foundation: Reports that organizations effectively implementing FinOps principles achieve 20-30% cloud cost savings annually, critical for SaaS profitability.

- OpenAI: Emphasizes the paramount importance of multimodal AI safety research and proactive alignment techniques to mitigate societal risks.

- IDC: Forecasts accelerated GitOps adoption, projecting over 75% of enterprises to use GitOps for containerized application deployment by 2027, streamlining SaaS release automation.

Technical FAQ

Q1: How does this architecture handle diverse multimodal AI models (vision, NLP, audio)?

The AI-driven FinOps GitOps architecture is designed for modularity. Each multimodal AI model (e.g., a vision model for object detection, an NLP model for text summarization, an audio model for transcription) is treated as a distinct artifact within the Model as Code (MaC) repository. GitOps ensures their declarative deployment. The AI-driven observability platform employs specialized monitoring agents and ML models tailored to each modality. For instance, image models might have specific metrics for object detection accuracy and fairness across image characteristics, while NLP models are monitored for sentiment drift and toxic output generation. The policy engine (OPA) can apply different responsible AI and FinOps policies based on the model's modality, criticality, and resource consumption profile.
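As a loose sketch of modality-specific policy selection, the snippet below merges a default profile with per-modality overrides and tightens it for high-criticality models. All check names and limits are hypothetical placeholders; in the architecture described here, the equivalent logic would more likely live in OPA policies than in application code.

```python
# Hypothetical policy profiles; check names and limits are illustrative only.
DEFAULT_POLICY = {
    "max_cost_per_hour": 100,
    "required_checks": ["privacy_scan"],
}

MODALITY_POLICIES = {
    "vision": {"required_checks": ["privacy_scan", "fairness_by_image_attributes"]},
    "nlp": {"required_checks": ["privacy_scan", "toxicity_scan", "sentiment_drift"]},
    "audio": {"required_checks": ["privacy_scan", "transcription_accuracy"]},
}


def resolve_policy(modality, criticality="standard"):
    """Merge the default profile with modality overrides and criticality rules."""
    policy = {**DEFAULT_POLICY, **MODALITY_POLICIES.get(modality, {})}
    if criticality == "high":
        # Build a new list so the shared profile definitions are never mutated.
        policy["required_checks"] = policy["required_checks"] + ["human_review"]
    return policy
```

Unknown modalities fall back to the default profile, which keeps new model types gated by at least the baseline checks until a dedicated profile is added.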

Q2: What's the role of explainable AI (XAI) in verifiable responsible AI alignment within this framework?

Explainable AI (XAI) is integral to verifiable responsible AI alignment. Within our framework, XAI techniques are applied both during model development (pre-deployment) and in production (post-deployment monitoring). During CI/CD, XAI tools generate explanations for model decisions, which are then analyzed by AI-driven policy checks to identify potential biases or illogical reasoning. In production, XAI provides insights into anomalous multimodal AI behavior detected by the observability platform. For instance, if a bias is detected, XAI can help pinpoint which input features or modalities contributed most to the biased output, facilitating faster debugging and model remediation. These XAI insights are captured in the verifiable audit trail, enhancing transparency and trust.
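One basic way to estimate which modality contributed most to an output is occlusion (ablation): replace one modality at a time with a neutral baseline and measure how much the model's score drops. The sketch below uses a toy scoring function as a stand-in for a real model; `toy_score` and its weights are invented purely for illustration, and this is one simple attribution technique rather than the specific XAI tooling used in the framework.

```python
def modality_attribution(score_fn, inputs, baseline):
    """Score drop per modality when that modality is swapped for its baseline."""
    full_score = score_fn(inputs)
    contributions = {}
    for modality in inputs:
        ablated = dict(inputs)
        ablated[modality] = baseline[modality]
        contributions[modality] = full_score - score_fn(ablated)
    return contributions


def toy_score(inputs):
    """Stand-in for a real model: a weighted sum of per-modality signals."""
    return 0.7 * inputs["image"] + 0.2 * inputs["text"] + 0.1 * inputs["audio"]


inputs = {"image": 1.0, "text": 1.0, "audio": 1.0}
baseline = {"image": 0.0, "text": 0.0, "audio": 0.0}
scores = modality_attribution(toy_score, inputs, baseline)
top_modality = max(scores, key=scores.get)
```

Occlusion ignores interactions between modalities, so for models with strong cross-modal effects a method such as Shapley-value attribution may give a more faithful picture at higher compute cost.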

Q3: How do you prevent AI-driven FinOps from becoming an opaque black box?

Preventing AI-driven FinOps from becoming an opaque black box is achieved through several mechanisms. Firstly, all FinOps policies are defined as code in Git, making them transparent and auditable. Secondly, the AI-driven recommendations and anomaly detections are not solely automated; they generate clear, actionable insights and alerts that explain *why* a particular optimization is suggested or *what* anomaly was detected. These insights are presented in dedicated FinOps dashboards with drill-down capabilities. Furthermore, FinOps is inherently a cultural practice involving collaboration between engineering, finance, and operations. Regular reviews and feedback loops ensure that the AI-driven systems are continuously tuned and understood by all stakeholders, fostering trust and preventing a 'black box' perception.
