
The Imperative for AI-Driven Platform Engineering in 2026

As we navigate 2026, the complexity of managing modern enterprise cloud-native and hybrid environments has reached an inflection point. Traditional infrastructure management approaches, even those augmented by basic automation, are struggling to keep pace with the velocity of innovation, the scale of operations, and the ever-evolving threat landscape. The demand for accelerated innovation, coupled with stringent requirements for cost efficiency, security, and resilience, necessitates a paradigm shift. At Apex Logic, we recognize that this shift is unequivocally towards AI-driven platform engineering — a holistic strategy that embeds artificial intelligence across the entire infrastructure lifecycle, from provisioning to optimization and security.

This isn't merely about AIOps as a reactive monitoring layer; it's about architecting a proactive, intelligent platform that learns, adapts, and self-optimizes. The goal is to transform infrastructure from a cost center and bottleneck into an agile, secure, and highly productive engine for the business. Our focus for 2026 is on leveraging advanced AI capabilities to enhance critical practices: FinOps for granular cost control, GitOps for declarative and consistent deployments, robust supply chain security as a foundational element, and intelligent release automation to dramatically improve engineering productivity.

The Evolving Enterprise Landscape

Enterprises today operate across heterogeneous environments — public clouds, private clouds, edge computing, and on-premises data centers. Each layer introduces its own set of challenges related to configuration management, compliance, resource utilization, and security vulnerabilities. Manually configuring and monitoring these distributed systems is not only error-prone but also prohibitively expensive and slow. AI-driven platform engineering offers the promise of abstracting away this complexity, providing a unified operational model that intelligently manages resources, enforces policies, and predicts potential issues before they impact services.

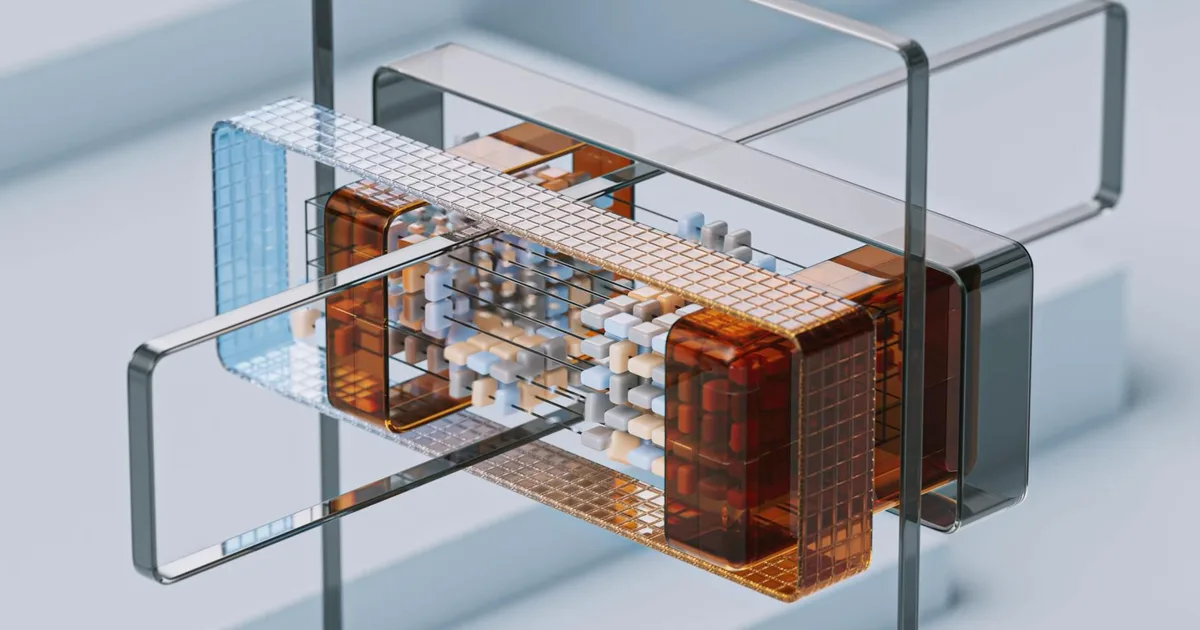

Architectural Blueprint for an Adaptive AI-Driven Platform

An effective AI-driven platform engineering architecture for 2026 must be modular, scalable, and inherently intelligent. It integrates several layers, each playing a crucial role in data ingestion, processing, intelligence generation, and automated action. Our proposed architecture at Apex Logic emphasizes a federated control plane augmented by a centralized intelligence plane.

Core Components and Data Flow

At its foundation, the platform relies on robust data ingestion from every conceivable source: logs, metrics, traces (OpenTelemetry), configuration data, security events, and financial usage reports. This raw data feeds into a unified data lake/mesh, where it is cleansed, normalized, and contextualized. Key components include:

- Observability & Telemetry Fabric: Collects real-time operational data from Kubernetes clusters, virtual machines, serverless functions, network devices, and applications.

- Policy Enforcement Engine: Utilizes frameworks like Open Policy Agent (OPA) or Kyverno to enforce security, compliance, and operational policies across the entire infrastructure lifecycle, from CI/CD to runtime.

- GitOps Control Plane: Manages infrastructure and application deployments declaratively from Git repositories, using tools such as Argo CD or Flux. This ensures a single source of truth and auditability.

- Infrastructure as Code (IaC) & Configuration Management: Tools such as Terraform, Pulumi, and Ansible provision and configure resources consistently.

- Service Mesh & API Gateway: Manage microservice communication, traffic routing, and security policies.

AI/ML Integration Layer: Models and Orchestration

The true differentiator of an AI-driven platform lies in its intelligence layer. This layer consumes the contextualized data and applies advanced machine learning models to derive actionable insights. We advocate for a hybrid approach utilizing both proprietary and open-source AI models, carefully managed through MLOps pipelines.

- Anomaly Detection & Predictive Analytics: Leveraging time-series analysis, clustering, and deep learning models to identify deviations in performance, cost, or security posture, and predict future incidents.

- Resource Optimization Engines: AI models that analyze usage patterns and recommend or automatically implement right-sizing for VMs, containers, and serverless functions, optimizing for both performance and cost (crucial for FinOps).

- Security Posture Management & Threat Intelligence: Multimodal AI models that correlate disparate security signals (logs, network flows, vulnerability scans) to detect sophisticated threats, identify misconfigurations, and assess overall supply chain security risks.

- Natural Language Processing (NLP): Supports intelligent incident correlation, root cause analysis assistance, and conversational interfaces for platform interaction.

The orchestration of these models is critical, ensuring they operate in concert, share insights, and trigger appropriate automated responses via the control plane.
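To make the anomaly detection idea concrete, here is a minimal sketch that flags points deviating sharply from a trailing window of recent values. It is a deliberately simple statistical stand-in for the time-series and deep learning models described above, not a production detector. The function name `detect_anomalies`, the window size, the threshold, and the sample latencies are all illustrative assumptions.

```python
from collections import deque
from statistics import mean, stdev


def detect_anomalies(values, window=5, threshold=3.0):
    """Return indices whose value deviates from the trailing-window mean
    by more than `threshold` standard deviations (hypothetical helper)."""
    history = deque(maxlen=window)
    anomalies = []
    for i, value in enumerate(values):
        if len(history) == window:
            mu = mean(history)
            sigma = stdev(history)
            # A perfectly flat window has sigma == 0; skip scoring instead of dividing by zero.
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                anomalies.append(i)
        history.append(value)
    return anomalies


# Illustrative request latencies in milliseconds, with one obvious spike.
latency_ms = [100, 102, 98, 101, 99, 100, 250, 101, 97]
print(detect_anomalies(latency_ms))
```

Note that the spike itself enters the window and inflates the spread for the next few points, which is one reason real systems tend to use more robust baselines (medians, seasonality-aware models) than this sketch.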

Trade-offs: Centralization vs. Federation

Architecting an AI-driven platform involves crucial trade-offs. A highly centralized model offers simplified management and global visibility but risks creating a single point of failure and can struggle with data locality and compliance in highly distributed enterprises. A federated model, where domain-specific platforms operate autonomously but report to a central AI intelligence layer, offers greater resilience and agility. However, it introduces complexities in maintaining consistency, data aggregation, and global policy enforcement. For most large enterprises in 2026, a hybrid federated approach with strong central governance and AI-driven aggregation is optimal, allowing teams autonomy while benefiting from enterprise-wide intelligence.

Integrating Core Pillars: FinOps, GitOps, and Supply Chain Security

The power of AI-driven platform engineering is truly unleashed when it actively augments and automates critical operational practices. At Apex Logic, we see these three pillars as non-negotiable for enterprise success.

FinOps with AI-Driven Cost Optimization

FinOps is no longer merely about cost reporting; it's about real-time, actionable financial intelligence. AI algorithms can analyze cloud billing data, resource utilization metrics, and application performance data to identify cost anomalies, recommend optimal resource sizing (e.g., instance types, storage tiers, serverless function memory), and predict future spend with high accuracy. This allows engineering and finance teams to collaborate on cost efficiency without sacrificing performance or availability.

For instance, an AI model might detect that a specific Kubernetes deployment consistently leaves about 70% of its requested CPU and memory unused during off-peak hours. It could then propose a lower resource allocation via a Git pull request, or initiate the rightsizing action directly if policies allow. This proactive approach is a cornerstone of effective FinOps in 2026, directly contributing to bottom-line savings.
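The rightsizing logic in this example can be sketched without any machine learning at all: take a high percentile of observed usage, add headroom, and propose the result only if it lowers the current request. The function name `recommend_cpu_request`, the 95th percentile, the 20% headroom, and the sample data below are assumptions chosen for illustration, not recommended defaults.

```python
import math


def recommend_cpu_request(usage_millicores, current_request,
                          percentile=95, headroom_pct=20):
    """Suggest a CPU request in millicores from observed usage samples.

    Returns None when there is no data or when the suggestion would not
    lower the current request (hypothetical helper).
    """
    if not usage_millicores:
        return None
    ordered = sorted(usage_millicores)
    # Nearest-rank percentile: the smallest sample with at least
    # `percentile` percent of the data at or below it.
    rank = max(1, math.ceil(percentile * len(ordered) / 100))
    peak = ordered[rank - 1]
    suggested = math.ceil(peak * (100 + headroom_pct) / 100)
    return suggested if suggested < current_request else None


# Illustrative off-peak samples for a container that requests 1000m.
samples = [280, 300, 310, 290, 305, 295, 300, 315, 285, 300]
print(recommend_cpu_request(samples, current_request=1000))
```

In a GitOps setup, the returned value would typically be written into the manifest on a branch and opened as a pull request rather than applied to the cluster directly.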

GitOps for Declarative Infrastructure and Application Delivery

GitOps provides the foundational framework for declarative infrastructure and application management. By using Git as the single source of truth for desired state, it ensures consistency, auditability, and rapid recovery. AI enhances GitOps by:

- Intelligent Drift Detection: AI models can learn the expected state and behavior of infrastructure and applications. When deviations occur outside of Git-defined configurations, AI can flag them, differentiate between benign and malicious changes, and even suggest automated remediation pull requests.

- Policy as Code Automation: Integrating AI with policy engines (like OPA) within a GitOps workflow allows for dynamic policy generation or refinement based on observed patterns. For example, an AI might recommend a new security policy based on a detected attack vector or a change in compliance requirements.
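The deterministic half of drift detection, comparing the state declared in Git with what is actually running, can be sketched as a recursive diff. GitOps controllers already perform their own comparison; this hypothetical `find_drift` helper only illustrates the kind of signal an AI layer would then classify as benign or suspicious. The manifests below are made-up examples.

```python
def find_drift(desired, live, path=""):
    """Recursively compare desired (Git) state with live state.

    Returns a list of (path, desired_value, live_value) for every field
    declared in `desired` that differs in `live`. Fields that exist only
    in `live` are ignored, since controllers often add defaults.
    """
    drift = []
    for key, want in desired.items():
        here = f"{path}.{key}" if path else key
        have = live.get(key) if isinstance(live, dict) else None
        if isinstance(want, dict):
            nested_live = have if isinstance(have, dict) else {}
            drift.extend(find_drift(want, nested_live, here))
        elif want != have:
            drift.append((here, want, have))
    return drift


# Illustrative manifests: someone scaled the deployment by hand.
desired = {"spec": {"replicas": 3, "image": "myregistry.com/api:1.4.2"}}
live = {"spec": {"replicas": 5, "image": "myregistry.com/api:1.4.2", "paused": False}}
print(find_drift(desired, live))
```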

Here's a simplified OPA policy example, enforced via GitOps, that an AI could potentially help generate or refine by identifying common misconfigurations:

```rego
package kubernetes.admission

import rego.v1

# Reject Pods that pull any container image from outside the approved registry.
deny contains msg if {
    input.request.kind.kind == "Pod"
    image := input.request.object.spec.containers[_].image
    not startswith(image, "myregistry.com/")
    msg := "Images must originate from 'myregistry.com/'"
}

# Reject Pods in which any container omits a CPU limit.
deny contains msg if {
    input.request.kind.kind == "Pod"
    container := input.request.object.spec.containers[_]
    not container.resources.limits.cpu
    msg := sprintf("Container '%s' must specify CPU limits", [container.name])
}
```

This policy, managed in Git, ensures that every container image comes from an approved registry and that every container defines a CPU limit, preventing common security and performance pitfalls. AI can analyze historical deployments and identify patterns of non-compliance to suggest such policies.

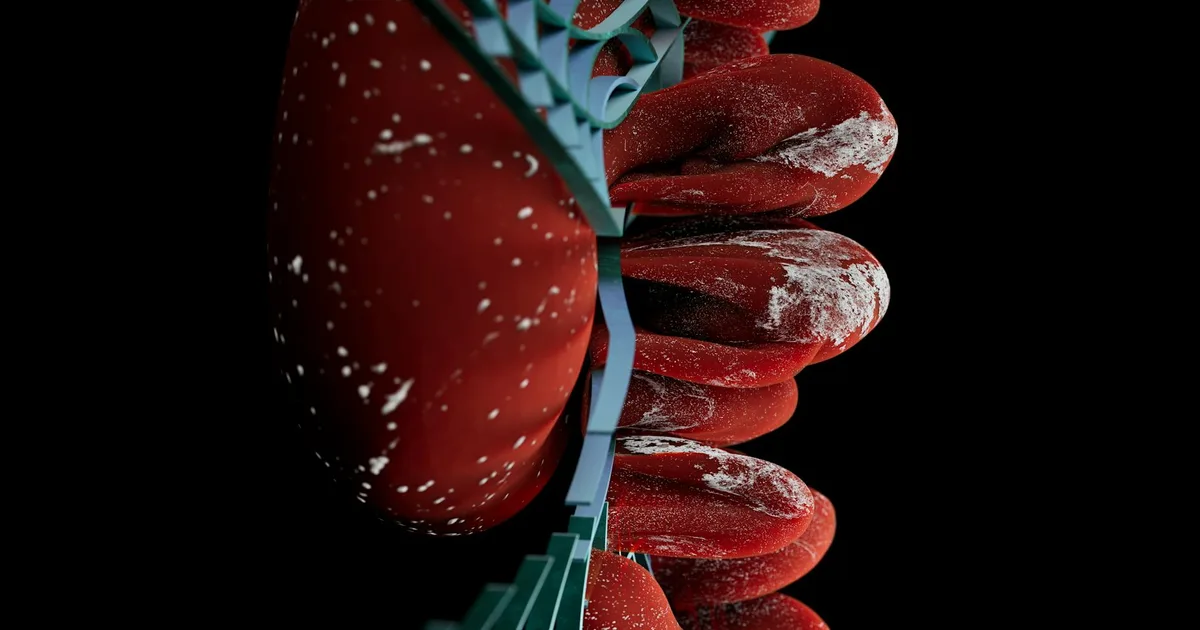

Fortifying Supply Chain Security

The software supply chain remains a primary attack vector. AI-driven platform engineering provides comprehensive solutions for supply chain security by integrating security checks throughout the entire software development lifecycle (SDLC), from code commit to runtime. Key AI applications include:

- Automated Vulnerability Scanning & Dependency Analysis: AI can prioritize vulnerabilities based on their exploitability and potential impact, reducing alert fatigue. It can also analyze transitive dependencies for hidden risks.

- Behavioral Anomaly Detection in CI/CD: Multimodal AI models monitor CI/CD pipelines for unusual access patterns, unauthorized modifications to build artifacts, or anomalous build times, indicating potential tampering.

- SBOM Generation & Verification: Automating the creation and continuous verification of Software Bills of Materials (SBOMs) to ensure components are legitimate and untampered.

- Runtime Attestation: AI continuously verifies the integrity of running workloads against their declared SBOMs and expected behaviors, immediately flagging any deviations.

This proactive, AI-augmented approach to security is indispensable for protecting enterprise assets in 2026.
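As a rough illustration of the SBOM verification and runtime attestation bullets above, the sketch below compares SHA-256 digests of observed artifacts against digests declared in an SBOM. Real SBOMs use richer formats such as SPDX or CycloneDX; the flat `{name: digest}` dictionary, the `verify_against_sbom` helper, and the component names are simplifying assumptions.

```python
import hashlib


def verify_against_sbom(sbom_digests, artifacts):
    """Compare artifact digests with those declared in a simplified SBOM.

    sbom_digests: {component_name: expected SHA-256 hex digest}
    artifacts:    {component_name: raw bytes observed at build or runtime}
    Returns {component_name: finding} for every discrepancy.
    """
    findings = {}
    for name, content in artifacts.items():
        expected = sbom_digests.get(name)
        actual = hashlib.sha256(content).hexdigest()
        if expected is None:
            findings[name] = "not listed in SBOM"
        elif actual != expected:
            findings[name] = "digest mismatch"
    # Components the SBOM declares but that were never observed.
    for name in sbom_digests.keys() - artifacts.keys():
        findings[name] = "declared but missing"
    return findings


# Illustrative data: one tampered library, one undeclared file, one missing component.
lib = b"trusted library bytes"
sbom = {"libfoo": hashlib.sha256(lib).hexdigest(), "libbar": "0" * 64}
running = {"libfoo": lib + b"!", "extra": b"?"}
print(verify_against_sbom(sbom, running))
```

The findings from a check like this are the raw deviations; deciding which ones warrant blocking a deployment is where prioritization models and policy come in.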

Operationalizing AI: Release Automation and Failure Modes

The ultimate goal of architecting an AI-driven platform is to accelerate engineering productivity and achieve highly reliable release automation.

Accelerating Release Automation and SDLC

AI can infuse intelligence into every stage of the release process:

- Intelligent Test Orchestration: AI analyzes code changes and historical test results to prioritize and select the most relevant test cases, reducing testing time while maintaining coverage.

- Automated Canary Deployments & Rollbacks: AI monitors key performance indicators (KPIs) and error rates during staged rollouts. If performance degrades or anomalies are detected, AI can automatically trigger a rollback, minimizing user impact.

- Predictive Performance Baselines: AI establishes dynamic performance baselines for applications. During a new release, it can immediately detect regressions or unexpected performance shifts that human eyes might miss.

- Root Cause Analysis (RCA) Assistance: Post-incident, AI can rapidly correlate logs, metrics, and traces across distributed systems to identify potential root causes, significantly reducing Mean Time To Resolution (MTTR).

This level of intelligent automation transforms the SDLC, allowing teams to deploy faster, more safely, and with higher confidence, directly boosting engineering productivity.
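The canary rollback decision described above reduces, in its simplest form, to comparing the canary's error rate with the baseline's. The following sketch assumes a fixed ratio threshold and a minimum traffic volume before judging; the function name `canary_decision` and every default value are illustrative, and a learned baseline would replace the hard-coded ratio in an AI-driven setup.

```python
def canary_decision(baseline_errors, baseline_total, canary_errors, canary_total,
                    max_ratio=2.0, min_requests=100):
    """Return 'promote', 'rollback', or 'wait' for a staged rollout."""
    if canary_total < max(min_requests, 1):
        return "wait"  # too little canary traffic to judge either way
    baseline_rate = baseline_errors / baseline_total if baseline_total else 0.0
    canary_rate = canary_errors / canary_total
    # Floor the baseline at 0.1% so a near-zero baseline does not make
    # a single canary error trigger a rollback.
    allowed = max(baseline_rate, 0.001) * max_ratio
    return "rollback" if canary_rate > allowed else "promote"


# Illustrative numbers: baseline at 0.5% errors, canary at 3%.
print(canary_decision(50, 10_000, 30, 1_000))
```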

Mitigating Failure Modes and Ensuring Resilience

While powerful, AI-driven systems introduce their own set of failure modes that must be meticulously addressed:

- Data Quality & Bias: AI models are only as good as the data they're trained on. Biased or poor-quality data can lead to incorrect decisions, resource misallocations, or security blind spots. Robust data governance, cleansing, and continuous monitoring of data pipelines are essential.

- Model Drift: As operational environments evolve, AI models can become stale and lose accuracy. Continuous retraining, A/B testing of models, and MLOps practices for model lifecycle management are critical to combat drift.

- "Black Box" Decisions & Explainability: Complex multimodal AI models can make decisions that are difficult for humans to interpret or audit. Implementing Explainable AI (XAI) techniques is crucial for building trust and enabling engineers to understand and debug automated actions.

- Alert Fatigue (AI-Generated): Poorly tuned AI can generate an overwhelming number of low-fidelity alerts. Intelligent alert correlation, anomaly scoring, and human-in-the-loop validation mechanisms are necessary.

- Integration Complexity: Integrating disparate AI components and data sources across a heterogeneous enterprise environment is challenging. Emphasizing open standards, API-first design, and robust integration testing is key.

At Apex Logic, we emphasize a "human-in-the-loop" approach, where critical AI-driven actions require human approval, especially in early adoption phases. This builds confidence and allows for continuous learning and refinement of the AI's decision-making capabilities.
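One way to picture a human-in-the-loop gate is as a routing function that lets only low-risk actions proceed automatically. The sketch below is an assumption about how such a gate might look, not a description of any specific product: the `ProposedAction` fields, the `route_action` helper, and the thresholds are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    description: str
    reversible: bool    # can the change be rolled back automatically?
    confidence: float   # model's own score in [0, 1]
    blast_radius: int   # number of workloads the change would touch


def route_action(action, min_confidence=0.9, max_blast_radius=5):
    """Return 'auto' only for low-risk actions; everything else needs approval."""
    if not action.reversible:
        return "needs_approval"
    if action.confidence < min_confidence:
        return "needs_approval"
    if action.blast_radius > max_blast_radius:
        return "needs_approval"
    return "auto"


resize = ProposedAction("Lower CPU request on one deployment", True, 0.97, 1)
delete = ProposedAction("Delete unused persistent volume", False, 0.99, 1)
print(route_action(resize), route_action(delete))
```

Tightening `min_confidence` and `max_blast_radius` in early adoption phases, then relaxing them as trust grows, is one way to express the gradual handover described above.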

Source Signals

- Gartner: Predicts that by 2026, 80% of large software engineering organizations will have established platform engineering teams, up from 45% in 2022, reflecting the growing adoption of this approach for enhancing developer experience and operational efficiency.

- Cloud Native Computing Foundation (CNCF): Highlights the increasing adoption of GitOps as a core practice for managing cloud-native infrastructure, with over 70% of organizations using Git for infrastructure configuration in their latest surveys.

- NIST: Continues to publish extensive guidelines (e.g., SSDF) emphasizing the critical need for software supply chain security, with a focus on automated tools and processes to identify and mitigate vulnerabilities across the SDLC.

- FinOps Foundation: Reports significant cost savings (often 20-30% within the first year) for organizations that implement mature FinOps practices, especially those leveraging automation and data analytics for optimization.

Technical FAQ

Q1: How does AI-driven platform engineering differ from traditional AIOps?

A1: Traditional AIOps primarily focuses on applying AI to operational data for anomaly detection, root cause analysis, and predictive insights, often as a separate layer. AI-driven platform engineering, as envisioned for 2026, embeds AI directly into the platform's core functionalities — provisioning, policy enforcement, security, and release — making it an inherent part of the control and data planes, not just an observational tool. It's about AI actively driving the platform's behavior and optimization.

Q2: What is the role of open-source AI in this architecture, given enterprise security concerns?

A2: Open-source AI plays a pivotal role, offering transparency, flexibility, and a vibrant community for innovation. Enterprises can leverage established frameworks (e.g., TensorFlow, PyTorch, Hugging Face models) for building custom intelligence. Security concerns are mitigated by rigorous MLOps practices: secure model training environments, vulnerability scanning of model dependencies, careful selection of trusted open-source components, and often, fine-tuning or deploying these models within private, controlled environments. The transparency of open-source AI can even aid in explainability and auditability.

Q3: How do we ensure that AI-driven automation doesn't lead to unintended consequences or 'runaway' systems?

A3: Preventing 'runaway' AI systems requires a multi-faceted approach. First, implement a robust human-in-the-loop mechanism, especially for critical or irreversible actions, allowing engineers to review and approve AI recommendations. Second, establish clear guardrails and policy-as-code rules that define the boundaries of AI's autonomy. Third, invest heavily in comprehensive observability and monitoring of the AI itself — tracking model performance, decision outcomes, and system impacts. Finally, implement circuit breakers and automated rollback mechanisms, ensuring that any negative impact from an AI-driven action can be immediately reversed.
